All Sorting Algorithms Explained with Complexity

Mr. Bambam Yadav / 1 days - 0

- 4 min read

Why Sorting Matters

Before diving into specific algorithms, it’s important to recognize why sorting is so vital. Whether you are building a school project or developing enterprise-level software, sorted data often improves performance and user experience. Search operations become faster, data becomes more readable, and certain algorithms like binary search require sorted input. Efficient sorting is at the core of database indexing, operating system scheduling, and graphics rendering.

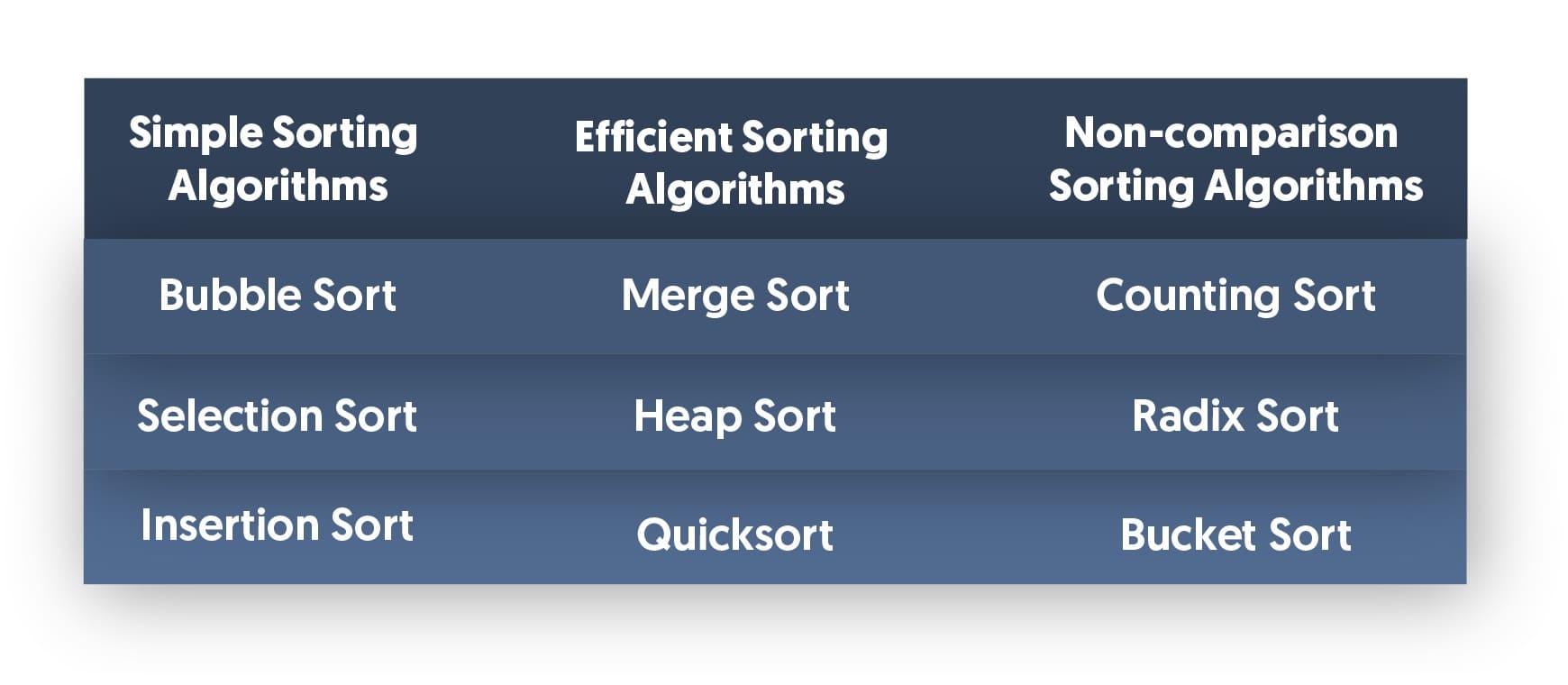

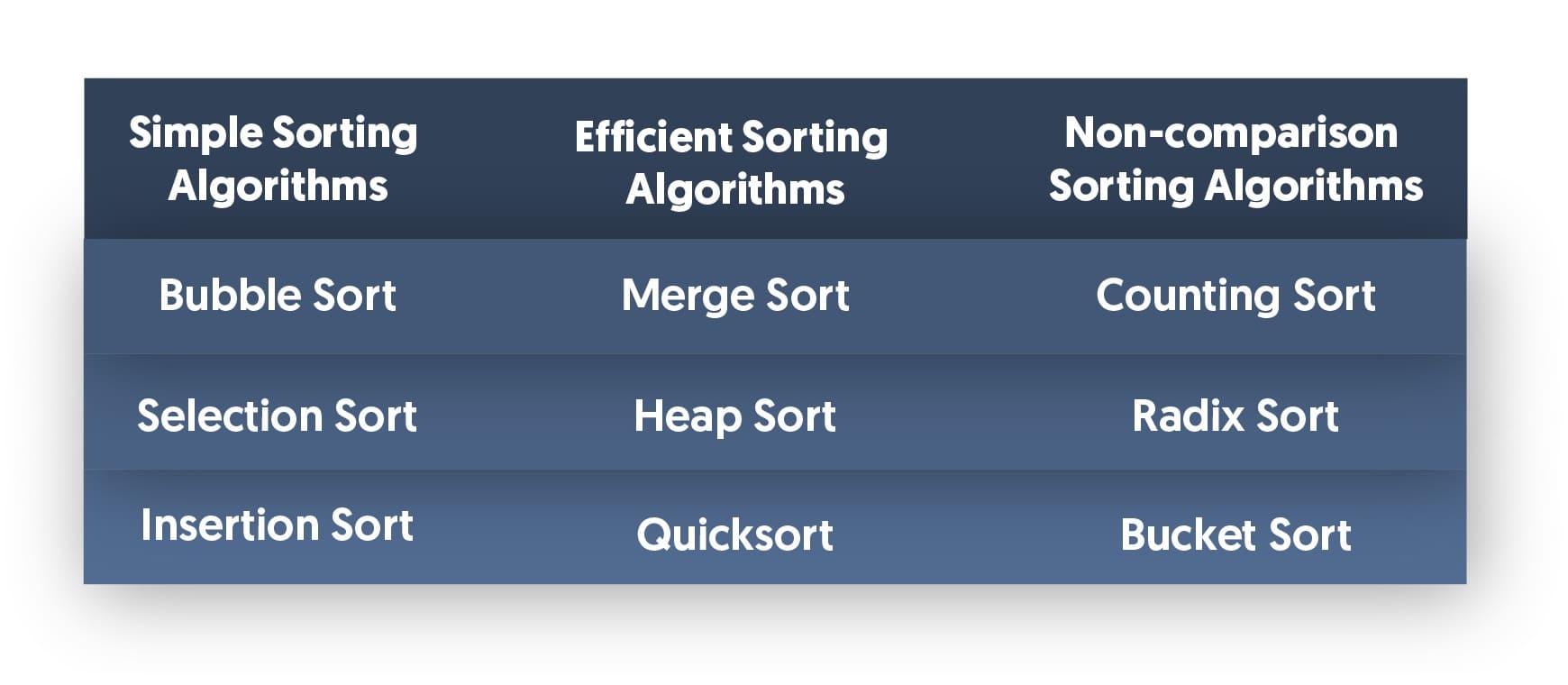

Categories of Sorting Algorithms

Sorting algorithms generally fall into two major categories:

- Comparison-Based Sorting:These algorithms compare elements to determine their order. Examples include Bubble Sort, Merge Sort, Quick Sort, and Heap Sort.

- Non-Comparison-Based Sorting:These use methods other than comparisons to sort data, such as Counting Sort and Radix Sort. They’re typically used for integers or fixed-length strings.

Let’s now look at all sorting algorithms one by one.

1. Bubble Sort

Overview:

Bubble Sort is often the first algorithm taught to beginners. It works by repeatedly stepping through the list, comparing adjacent elements, and swapping them if they are in the wrong order. This process is repeated until the array is sorted.

Time Complexity:

- Best Case: O(n) [when the array is already sorted]

- Average and Worst Case: O(n²)

Space Complexity:O(1)

Use Case:Educational; rarely used in production due to inefficiency.

2. Selection Sort

Overview:

Selection Sort selects the smallest (or largest) element from the unsorted portion of the list and places it at the beginning. It continues this process with the remaining unsorted elements.

Time Complexity:

- Best, Average, Worst Case: O(n²)

Space Complexity:O(1)

Use Case: Simple to implement but not suitable for large datasets.

3. Insertion Sort

Overview:

Insertion Sort builds the final sorted array one item at a time. It takes each element and inserts it into its proper position relative to previously sorted elements.

Time Complexity:

- Best Case: O(n) [nearly sorted input]

- Average and Worst Case: O(n²)

Space Complexity:O(1)

Use Case:Effective for small or nearly sorted datasets.

4. Merge Sort

Overview:

Merge Sort is a classic divide-and-conquer algorithm. It splits the list into halves, recursively sorts each half, and then merges the sorted halves.

Time Complexity:

- Best, Average, Worst Case: O(n log n)

Space Complexity:O(n)

Use Case:Ideal for large datasets and stable sorting needs.

5. Quick Sort

Overview:

Quick Sort is another divide-and-conquer algorithm that selects a 'pivot' and partitions the array into elements less than and greater than the pivot. It then recursively sorts the partitions.

Time Complexity:

- Best and Average Case: O(n log n)

- Worst Case: O(n²) [rare with good pivot selection]

Space Complexity: O(log n) average

Use Case:Fastest for large datasets with proper pivot strategy.

6. Heap Sort

Overview:

Heap Sort builds a max heap (or min heap) from the array and then repeatedly removes the largest (or smallest) element to build the sorted array.

Time Complexity:

- Best, Average, Worst Case: O(n log n)

Space Complexity: O(1)

Use Case:Good for large datasets when stability isn't required.

7. Counting Sort

Overview:

Counting Sort is a non-comparison algorithm suitable for sorting integers. It counts the occurrence of each value and uses that information to place elements in order.

Time Complexity:

- Best, Average, Worst Case: O(n + k), where k is the range of the input

Space Complexity:O(n + k)

Use Case: Sorting small ranges of integers quickly.

8. Radix Sort

Overview:

Radix Sort processes the digits of numbers from least significant to most significant (or vice versa), grouping by digits using counting sort as a subroutine.

Time Complexity:

- O(nk), where k is the number of digits in the maximum number

Space Complexity:O(n + k)

Use Case: Large sets of integers or strings of fixed lengths.

9. Bucket Sort

Overview:

Bucket Sort distributes elements into several buckets, sorts each bucket individually (often using insertion sort), and then concatenates the sorted buckets.

Time Complexity:

- Best Case: O(n + k)

- Worst Case: O(n²)

Space Complexity:O(n + k)

Use Case:Uniformly distributed floating-point numbers.

Choosing the Right Sorting Algorithm

Sorting isn’t one-size-fits-all. The right algorithm depends on the nature of the dataset, the required speed, and constraints like memory usage or stability. Here’s a conversational take to help clarify when to use which:

- Got a small or nearly sorted list? Insertion Sort might just do the trick.

- Sorting large files? Merge Sort’s your dependable ally.

- Need speed with low overhead? Quick Sort is the go-to—just pick a good pivot!

- Sorting numbers in a narrow range? Counting Sort and Radix Sort shine here.

That’s why students in a C Programming Course in Noida are encouraged to not just learn the syntax of these algorithms but to deeply understand their mechanics and suitable contexts.

Visualizing Sorting with Real-World Examples

Imagine organizing a library shelf:

- Bubble Sort would be like repeatedly walking along the shelf, swapping misplaced books until no more swaps are needed.

- Selection Sort is like scanning the whole shelf, picking the smallest book, and putting it in the right place.

- Insertion Sort would mean picking one book at a time and inserting it where it fits among already sorted books.

- Merge Sort involves dividing books into piles, sorting each pile, and merging the piles back in order.

- Quick Sort would pick one book (pivot), place all smaller books on the left, larger on the right, and then repeat this for each side.

These analogies help bridge theoretical understanding with intuitive learning.

Stability in Sorting Algorithms

A sorting algorithm is considered stable if it preserves the relative order of equal elements. This becomes crucial when the data being sorted has multiple fields.

- Stable Algorithms: Bubble Sort, Insertion Sort, Merge Sort, Counting Sort

- Unstable Algorithms: Quick Sort, Heap Sort, Selection Sort

Understanding stability is essential for scenarios like multi-key sorting (e.g., sort by name, then age).

Recap: Time and Space Complexities

Here’s a quick reference to compare the algorithms:

| Algorithm | Best Case | Average Case | Worst Case | Space | Stable |

|---|

| Bubble Sort | O(n) | O(n²) | O(n²) | O(1) | Yes |

| Selection Sort | O(n²) | O(n²) | O(n²) | O(1) | No |

| Insertion Sort | O(n) | O(n²) | O(n²) | O(1) | Yes |

| Merge Sort | O(n log n) | O(n log n) | O(n log n) | O(n) | Yes |

| Quick Sort | O(n log n) | O(n log n) | O(n²) | O(log n) | No |

| Heap Sort | O(n log n) | O(n log n) | O(n log n) | O(1) | No |

| Counting Sort | O(n + k) | O(n + k) | O(n + k) | O(n + k) | Yes |

| Radix Sort | O(nk) | O(nk) | O(nk) | O(n + k) | Yes |

| Bucket Sort | O(n + k) | O(n + k) | O(n²) | O(n + k) | Yes |

Final Thoughts

Sorting algorithms may initially seem like dry, academic constructs, but they’re deeply embedded in the functioning of the digital world. For students pursuing a C Programming Course in Noida,diving deep into all sorting algorithmsis not just about passing exams—it’s about developing a mindset that appreciates the balance between time, space, and logic.

These algorithms teach more than sorting. They nurture problem-solving, efficiency, and algorithmic clarity. Whether you’re aiming for competitive programming, web development, or a career in data science, mastering sorting is a timeless investment. The more one experiments with these algorithms, the more intuitive they become—and the better one gets at writing clean, logical, and high-performance code.

So, take that leap. Sort some data. Explore edge cases. And let each algorithm teach you something new about the art and science of programming.