This blog will take you through the most widely used libraries, what they do, and why they are important.

Why Python for Data Science?

Before diving into the libraries, let us quickly look at why Python is the preferred language for so many data science professionals:

- Ease of Use: Python has simple syntax that makes it easy to learn and apply.

- Community Support: Python has one of the largest programming communities, ensuring access to tutorials, forums, and open-source contributions.

- Extensive Libraries: Thousands of libraries exist for handling data, statistics, visualization, and machine learning.

- Integration: Python integrates smoothly with databases, cloud services, and other programming languages.

Clearly, the availability of powerful libraries is one of the strongest reasons Python is the go-to choice.

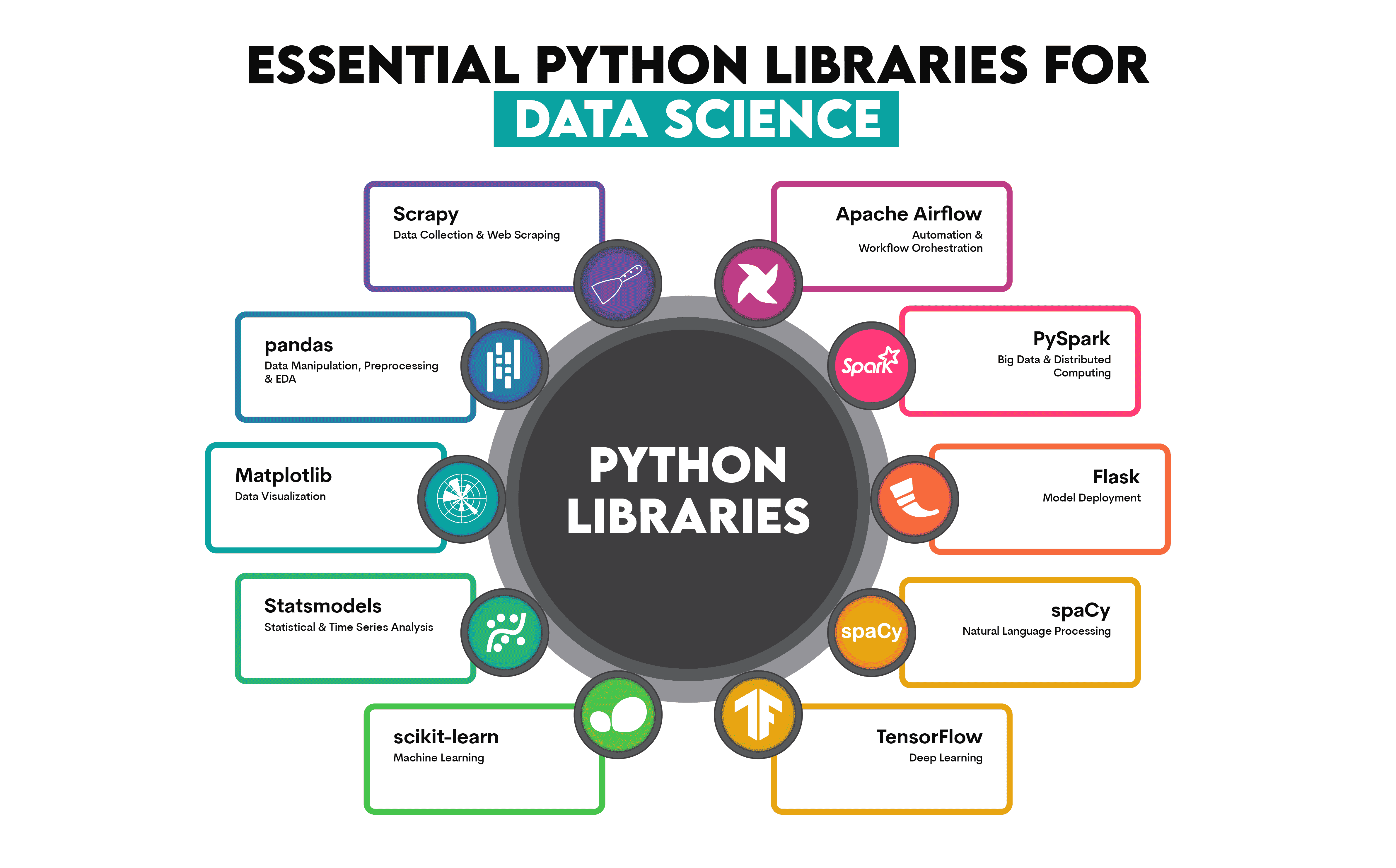

Top Python Libraries for Data Science Professionals

1. NumPy

NumPy, short for Numerical Python, is the foundation for data science in Python. It provides support for large multidimensional arrays and mathematical functions to operate on them efficiently.

Why it matters:

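- Enables much faster computation than regular Python lists, because operations run as vectorized, compiled code over whole arrays instead of element-by-element Python loops.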

- Essential for performing linear algebra, Fourier transforms, and other mathematical operations.

- Serves as the base for many other libraries like pandas and scikit-learn.

Use case: If you need to handle large numerical datasets or perform matrix manipulations, NumPy is your go-to library.
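To make this concrete, here is a minimal sketch of vectorized arithmetic and a small matrix computation. The arrays and variable names are made up for illustration.

```python
import numpy as np

# Vectorized arithmetic: one expression operates on every element,
# with no explicit Python loop.
prices = np.array([10.0, 20.0, 30.0])
quantities = np.array([1, 2, 3])
revenue = prices * quantities      # element-wise product
total = revenue.sum()

# Matrix manipulation: solve the linear system A @ x = b.
A = np.array([[2.0, 1.0],
              [1.0, 3.0]])
b = np.array([3.0, 5.0])
x = np.linalg.solve(A, b)

print(total)
print(x)
```

The same pattern scales to arrays with millions of elements, which is where the speed advantage over plain lists becomes noticeable.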

2. Pandas

If NumPy is the foundation, Pandas is the backbone of data manipulation. It provides two key data structures: Series for one-dimensional data and DataFrame for two-dimensional data.

Why it matters:

- Makes data cleaning, filtering, grouping, and transformation much easier.

- Allows seamless handling of missing values.

- Works smoothly with CSV, Excel, SQL databases, and more.

Use case: When you are working with tabular data, like analyzing sales records or survey responses, Pandas helps you organize and manipulate data quickly.
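As a rough illustration of the sales-records use case, the sketch below fills a missing value, adds a derived column, and groups by region. The column names and numbers are invented for the example.

```python
import numpy as np
import pandas as pd

# A small table of sales records with one missing value.
sales = pd.DataFrame({
    "region": ["North", "South", "North", "South"],
    "units": [10, np.nan, 5, 8],
    "unit_price": [2.5, 4.0, 2.5, 4.0],
})

# Handle the missing value, then derive a new column.
sales["units"] = sales["units"].fillna(0)
sales["revenue"] = sales["units"] * sales["unit_price"]

# Group and aggregate: total revenue per region.
revenue_by_region = sales.groupby("region")["revenue"].sum()
print(revenue_by_region)
```

Treating a missing unit count as zero is only one possible choice here; depending on the data, dropping the row or imputing a typical value may be more appropriate.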

3. Matplotlib

Data visualization is one of the most important steps in data science, and Matplotlib is the classic library for it. It allows you to create static, animated, and interactive plots.

Why it matters:

- Offers a wide range of visualization options, from line charts and bar graphs to scatter plots and histograms.

- Highly customizable for creating professional-level graphics.

- Serves as the base for advanced visualization libraries like Seaborn.

Use case: If you need to present trends, patterns, or outliers in a dataset, Matplotlib is your first choice.
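A simple trend chart might look like the sketch below. The data, labels, and output file name are illustrative assumptions, and the non-interactive "Agg" backend is chosen only so the script also runs on machines without a display.

```python
import matplotlib
matplotlib.use("Agg")  # render to files instead of opening a window
import matplotlib.pyplot as plt

months = ["Jan", "Feb", "Mar", "Apr"]
sales = [120, 135, 90, 160]

# Create a figure with one set of axes and draw a line chart on it.
fig, ax = plt.subplots(figsize=(6, 3))
ax.plot(months, sales, marker="o", label="Monthly sales")
ax.set_xlabel("Month")
ax.set_ylabel("Units sold")
ax.set_title("Sales trend")
ax.legend()

# Save the chart as a PNG image in the current directory.
fig.savefig("sales_trend.png", dpi=100)
```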

4. Seaborn

Seaborn is built on top of Matplotlib and takes visualization to the next level. It provides a high-level interface for creating attractive and informative statistical graphics.

Why it matters:

- Simplifies the process of creating complex plots.

- Provides built-in themes for beautiful visuals.

- Great for visualizing distributions and relationships between variables.

Use case: Seaborn is perfect when you need quick yet visually appealing insights into your data, for example a heatmap that shows the correlations between variables.
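A correlation heatmap can be sketched in a few lines. The dataset here is synthetic and the column names are hypothetical; in practice you would pass your own DataFrame.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so no display is needed
import numpy as np
import pandas as pd
import seaborn as sns

# Synthetic data: exam_score depends on hours_studied, shoe_size does not.
rng = np.random.default_rng(0)
hours = rng.uniform(0, 10, size=50)
df = pd.DataFrame({
    "hours_studied": hours,
    "exam_score": 50 + 4 * hours + rng.normal(0, 5, size=50),
    "shoe_size": rng.normal(42, 2, size=50),
})

# Pairwise correlations, drawn as an annotated heatmap.
corr = df.corr()
sns.set_theme()  # apply Seaborn's built-in default theme
ax = sns.heatmap(corr, annot=True, fmt=".2f", vmin=-1, vmax=1, cmap="coolwarm")
ax.set_title("Correlation matrix")
```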

5. Scikit-learn

Scikit-learn is one of the most popular machine learning libraries for Python. It offers simple and efficient tools for data mining and analysis.

Why it matters:

- Provides implementations of machine learning algorithms like regression, classification, clustering, and dimensionality reduction.

- Includes utilities for model evaluation, preprocessing, and feature selection.

- Works seamlessly with NumPy and Pandas.

Use case: Whether you are building a spam detection model or predicting housing prices, Scikit-learn makes machine learning accessible and efficient.
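The typical workflow (split the data, preprocess, fit, evaluate) can be sketched as follows. This example uses the small iris dataset that ships with Scikit-learn rather than spam or housing data, and the choice of logistic regression is just one reasonable option.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Load a bundled dataset: 150 flowers, 4 features, 3 classes.
X, y = load_iris(return_X_y=True)

# Hold out a quarter of the data for evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Preprocessing and the classifier are chained into one estimator.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Fraction of correctly classified test samples.
accuracy = model.score(X_test, y_test)
print(f"Test accuracy: {accuracy:.2f}")
```

Because every estimator shares the same `fit` / `predict` / `score` interface, swapping in a different algorithm usually means changing a single line.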

6. TensorFlow

When it comes to deep learning, TensorFlow is one of the most widely used libraries. Developed by Google, it is powerful and scalable.

Why it matters:

- Supports neural networks for image recognition, natural language processing, and more.

- Offers flexibility for research as well as production-level deployment.

- Has strong community support and integration with tools like Keras.

Use case: If you are working on advanced artificial intelligence projects like voice assistants or image classifiers, TensorFlow is indispensable.
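Underneath the neural network layers, TensorFlow is built around automatic differentiation. The sketch below is a toy example, not a real model: it uses `tf.GradientTape` to minimize a simple quadratic with gradient descent, and the learning rate and step count are arbitrary choices.

```python
import tensorflow as tf

# A single trainable parameter, starting at 0.
w = tf.Variable(0.0)
learning_rate = 0.1

for _ in range(100):
    # Record the computation so TensorFlow can differentiate it.
    with tf.GradientTape() as tape:
        loss = (w - 3.0) ** 2
    grad = tape.gradient(loss, w)
    # Gradient descent step: w <- w - learning_rate * grad
    w.assign_sub(learning_rate * grad)

print(float(w.numpy()))  # close to 3.0, the minimizer of the loss
```

Training a real network follows the same loop, with the loss computed from a model's predictions instead of a hand-written formula.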

7. Keras

Keras is a high-level neural networks API that runs on top of TensorFlow. It is user-friendly, modular, and fast.

Why it matters:

- Makes building deep learning models easy with minimal code.

- Allows quick prototyping and experimentation.

- Integrates with TensorFlow, and Keras 3 can also run on JAX and PyTorch. The older CNTK and Theano backends are no longer supported.

Use case: If you are a beginner in deep learning and want to build models quickly, Keras is your best friend.
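To give a feel for how little code a model needs, here is a minimal sketch of a small binary classifier. It assumes Keras is used through a TensorFlow installation (`from tensorflow import keras`); the data is random, and the layer sizes and epoch count are arbitrary.

```python
import numpy as np
from tensorflow import keras

# Toy data: 32 samples with 4 features; label is 1 when the features sum above 2.
rng = np.random.default_rng(0)
X = rng.random((32, 4)).astype("float32")
y = (X.sum(axis=1) > 2.0).astype("float32")

# Define the network as a stack of layers.
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(8, activation="relu"),
    keras.layers.Dense(1, activation="sigmoid"),
])

# Choose the optimizer, loss, and metric, then train.
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
model.fit(X, y, epochs=2, batch_size=8, verbose=0)

# Predicted probabilities for the first three samples.
pred = model.predict(X[:3], verbose=0)
print(pred.shape)
```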

8. SciPy

SciPy builds on NumPy and provides advanced functionalities for scientific and technical computing.

Why it matters:

- Offers modules for optimization, integration, interpolation, and signal processing.

- Ideal for engineers and researchers dealing with scientific data.

- Complements other libraries like NumPy and Matplotlib.

Use case: Useful in scenarios like optimizing supply chain operations or simulating physical systems.
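Two of these modules, optimization and integration, are shown in the short sketch below. The cost function is a made-up stand-in for a real objective such as a supply chain cost.

```python
import numpy as np
from scipy import integrate, optimize


def cost(x):
    """Hypothetical cost surface with its minimum at x = (2, -1)."""
    return (x[0] - 2.0) ** 2 + (x[1] + 1.0) ** 2 + 5.0


# Numerical optimization: find the inputs that minimize the cost.
result = optimize.minimize(cost, x0=np.array([0.0, 0.0]))
print(result.x, result.fun)

# Numerical integration: area under sin(x) between 0 and pi.
area, abs_error = integrate.quad(np.sin, 0.0, np.pi)
print(area)
```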

9. Statsmodels

For professionals who rely heavily on statistics, Statsmodels is the go-to library.

Why it matters:

- Provides tools for regression, hypothesis testing, and time-series analysis.

- Complements Pandas and NumPy for in-depth statistical modeling.

- Especially useful for econometrics and academic research.

Use case: If your work involves testing hypotheses or running regression models, Statsmodels is perfect.

10. Plotly

Plotly is a modern library for interactive visualizations. Unlike static charts, Plotly figures respond to hovering, zooming, and panning, and they can be shared online or combined into dashboards.

Why it matters:

- Offers interactivity for exploring data in real time.

- Can create dashboards and web-based data apps.

- Integrates with Jupyter notebooks and other tools.

Use case: Best for creating dashboards or interactive reports for business presentations.

11. NLTK (Natural Language Toolkit)

Text data is everywhere, from emails to social media posts. NLTK is one of the most established libraries for natural language processing.

Why it matters:

- Provides tools for tokenization, stemming, lemmatization, and part-of-speech tagging.

- Useful for analyzing text and building chatbots.

- Great for academic as well as practical NLP projects.

Use case: Perfect for sentiment analysis or extracting insights from large volumes of text data.
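Tokenization and stemming can be sketched as below. This example deliberately sticks to `RegexpTokenizer` and `PorterStemmer`, which work without downloading extra NLTK data; other tools such as `word_tokenize` or the WordNet lemmatizer need additional resources fetched with `nltk.download`.

```python
from nltk.stem import PorterStemmer
from nltk.tokenize import RegexpTokenizer

text = "The runners were running quickly through the crowded streets."

# Tokenization: split the text into lowercase word tokens.
tokenizer = RegexpTokenizer(r"\w+")
tokens = tokenizer.tokenize(text.lower())

# Stemming: reduce each token to a crude root form.
stemmer = PorterStemmer()
stems = [stemmer.stem(token) for token in tokens]

print(tokens)
print(stems)
```

Stems are not always dictionary words; lemmatization is the better choice when readable root forms matter.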

12. PyTorch

PyTorch is another leading deep learning library, originally developed by Facebook (now Meta). It has gained popularity for its flexibility and ease of use.

Why it matters:

- Offers dynamic computation graphs, making debugging easier.

- Widely used in academic research.

- Strong support for GPU acceleration.

Use case: If you want to explore cutting-edge deep learning research or develop custom AI models, PyTorch is highly recommended.
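The sketch below fits a one-parameter linear model with a hand-written training loop. It is a toy example: the data follows y = 3x + 0.5 exactly, and the learning rate and step count are arbitrary. The computation graph is rebuilt on every forward pass, which is why ordinary Python debugging tools work inside the loop.

```python
import torch

torch.manual_seed(0)

# Use a GPU when one is available, otherwise fall back to the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"

# Toy data: y = 3x + 0.5
x = torch.linspace(0, 1, 50).unsqueeze(1).to(device)
y = 3.0 * x + 0.5

model = torch.nn.Linear(1, 1).to(device)
optimizer = torch.optim.SGD(model.parameters(), lr=0.5)
loss_fn = torch.nn.MSELoss()

for _ in range(500):
    optimizer.zero_grad()          # clear gradients from the previous step
    loss = loss_fn(model(x), y)    # forward pass builds the graph dynamically
    loss.backward()                # backpropagate through that graph
    optimizer.step()               # update the weight and bias

weight = model.weight.item()
bias = model.bias.item()
print(weight, bias)
```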

How to Choose the Right Library

With so many libraries available, choosing the right one depends on your project needs. Here are some guidelines:

- For data manipulation: Pandas and NumPy.

- For visualization: Matplotlib, Seaborn, or Plotly.

- For machine learning: Scikit-learn.

- For deep learning: TensorFlow, Keras, or PyTorch.

- For statistics: Statsmodels.

- For natural language processing: NLTK.

Combining these libraries effectively is the key to becoming a successful data science professional.

Why a Course Can Help You Master These Libraries

While you can learn these libraries through self-study, structured learning can save time and provide real-world insights. Platforms like Uncodemy offer a Data Science with Python Course that covers these essential libraries in detail. With expert guidance, hands-on projects, and industry-level case studies, you can build confidence and become job-ready faster.

Such a course not only teaches you how to use the libraries but also when and why to use them, which is the true skill employers look for.

Conclusion

Python libraries are the secret weapons of data science professionals. From handling data with Pandas and NumPy to building intelligent models with TensorFlow and PyTorch, these libraries empower you to turn raw information into meaningful insights.

If you want to build a strong career in data science, mastering these libraries is non-negotiable. Start by practicing on small projects, then move to complex real-world datasets. And if you want structured guidance, the Data Science with Python Course at Uncodemy in Noida is an excellent way to gain both technical expertise and practical experience.

The world is producing more data than ever before. With the right tools in your hands, you can be the professional who turns that data into decisions.