The Evolving Toolkit of a Data Scientist in 2026

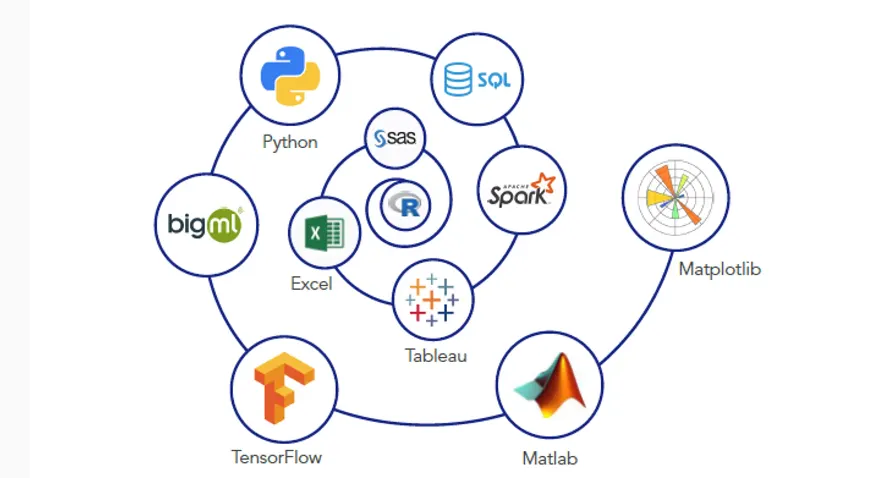

The modern Data Scientist is a versatile professional, requiring proficiency across various stages of the data lifecycle: from data collection and cleaning to analysis, modelling, visualization, and deployment. The tools reflect this multifaceted role, often integrating AI and Machine Learning (ML) capabilities to enhance efficiency and accuracy.

Core Data Science Tools

These are the foundational tools that form the backbone of almost any Data Science project:

1. Programming Languages

· Python: Remains the most widely used language in Data Science. Its extensive ecosystem of libraries makes it versatile enough to cover data manipulation, statistical analysis, Machine Learning, and Deep Learning.

o Why learn it: Its readability, vast community support, and rich libraries (Pandas, NumPy, Scikit-learn, TensorFlow, PyTorch) make it indispensable for everything from data cleaning to building complex AI models.

· R: A powerful language primarily used for statistical computing and graphics. While Python has gained ground, R is still favoured in academia and for advanced statistical modelling.

o Why learn it: Excellent for statistical analysis, data visualization (especially with ggplot2), and reproducible research.

· SQL (Structured Query Language): The universal language for managing and querying relational databases. Every Data Scientist must be proficient in SQL to extract and manipulate data stored in databases.

o Why learn it: Essential for data extraction, filtering, aggregation, and joining data from various sources.
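
As a rough illustration of the extraction, filtering, aggregation, and joining mentioned above, here is a minimal sketch that runs SQL from Python using the standard-library sqlite3 module. The customers and orders tables, their columns, and every value in them are hypothetical and exist only for this example.

```python
import sqlite3

# In-memory database with two hypothetical tables (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'North'), (2, 'South'), (3, 'North');
    INSERT INTO orders VALUES (1, 1, 120.0), (2, 2, 80.0), (3, 3, 40.0), (4, 1, 15.0);
""")

# Join orders to customers, filter out small orders, aggregate per region.
query = """
    SELECT c.region, COUNT(*) AS n_orders, SUM(o.amount) AS revenue
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    WHERE o.amount >= 20
    GROUP BY c.region
    ORDER BY revenue DESC
"""
rows = conn.execute(query).fetchall()
conn.close()

for region, n_orders, revenue in rows:
    print(region, n_orders, revenue)
```

The same JOIN, WHERE, and GROUP BY pattern carries over to production databases such as PostgreSQL or MySQL, although connection handling and some syntax details differ.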

2. Data Manipulation & Analysis Libraries/Frameworks (Primarily Python)

· Pandas: A fundamental Python library for data manipulation and analysis. Its core data structure, the DataFrame, is designed for working efficiently with tabular data.

o Why learn it: Crucial for data cleaning, transformation, merging, and preparing data for modelling.

· NumPy: The core library for numerical computing in Python, providing support for large, multi-dimensional arrays and matrices, along with a collection of mathematical functions to operate on these arrays.

o Why learn it: Underpins many other Data Science libraries and is essential for efficient numerical operations.

· Apache Spark: An open-source, distributed processing system used for big data workloads. It's designed for fast computation and can handle large-scale data processing and analytics.

o Why learn it: Necessary for working with datasets that are too large to fit into a single machine's memory.
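
To make the Pandas and NumPy descriptions above more concrete, the sketch below cleans, transforms, and merges two small tables. The sales and stores DataFrames, their column names, and their values are hypothetical, and filling missing unit counts with zero is an assumption made for the example rather than a general recommendation.

```python
import numpy as np
import pandas as pd

# Hypothetical sales records with one missing value (illustrative data only).
sales = pd.DataFrame({
    "store_id": [1, 2, 2, 3],
    "units": [10, np.nan, 5, 8],
    "unit_price": [2.5, 4.0, 4.0, 3.0],
})
stores = pd.DataFrame({
    "store_id": [1, 2, 3],
    "city": ["Pune", "Delhi", "Pune"],
})

# Cleaning: treat a missing unit count as zero (an assumption for this sketch).
sales["units"] = sales["units"].fillna(0)

# Transformation: element-wise NumPy arithmetic on whole columns, no Python loop.
sales["revenue"] = np.multiply(
    sales["units"].to_numpy(), sales["unit_price"].to_numpy()
)

# Merging: attach the city to each sale, then aggregate revenue per city.
merged = sales.merge(stores, on="store_id", how="left")
revenue_by_city = merged.groupby("city")["revenue"].sum()

print(revenue_by_city)
```

For data that no longer fits in memory, Spark's DataFrame API follows a broadly similar select, join, and group-by style, but it runs across a cluster.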

3. Data Visualization Tools

· Matplotlib & Seaborn (Python Libraries): These are the go-to libraries for creating static, interactive, and animated visualizations in Python. Matplotlib provides the basic plotting functionalities, while Seaborn offers a high-level interface for drawing attractive statistical graphics.

o Why learn them: Essential for exploratory data analysis (EDA) and communicating insights visually.

· Tableau & Power BI: Leading business intelligence (BI) tools that allow users to create interactive dashboards and reports without extensive coding. They connect to various data sources and offer drag-and-drop interfaces.

o Why learn them: Highly valued for creating professional, shareable, and interactive data dashboards for business stakeholders.
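
For the code-based side of visualization, a basic Matplotlib chart can be sketched as follows. The month labels, sign-up counts, and output filename are made up for illustration, and the Agg backend is selected only so the script runs without a display.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend; renders to files, not a window
import matplotlib.pyplot as plt

# Hypothetical monthly figures (illustrative data only).
months = ["Jan", "Feb", "Mar", "Apr"]
signups = [120, 135, 160, 150]

fig, ax = plt.subplots(figsize=(6, 3))
ax.plot(months, signups, marker="o")
ax.set_title("Monthly sign-ups (illustrative data)")
ax.set_xlabel("Month")
ax.set_ylabel("Sign-ups")
fig.tight_layout()

# Write the chart to a PNG file in the working directory.
fig.savefig("signups.png")
```

Seaborn builds on the same figure and axes objects, so a chart like this can be restyled or swapped for a statistical plot without changing the surrounding code much.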

4. Machine Learning Frameworks

· Scikit-learn: A comprehensive Python library for Machine Learning. It provides a wide range of supervised and unsupervised learning algorithms, along with tools for model selection, preprocessing, and evaluation.

o Why learn it: Excellent for traditional ML tasks and serves as a great starting point for understanding ML concepts.

· TensorFlow & PyTorch: Open-source Deep Learning frameworks developed by Google and Facebook (Meta) respectively. They are used for building and training complex neural networks, especially for tasks involving images, text, and speech.

o Why learn them: Essential for advanced AI applications, including Computer Vision, Natural Language Processing (NLP), and Generative AI.
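
The preprocessing, training, and evaluation workflow described for Scikit-learn above can be sketched in a few lines. This example uses the Iris dataset bundled with Scikit-learn; the choice of logistic regression, the 25% test split, and the random seed are arbitrary assumptions for illustration, not a recommended configuration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Load a small built-in dataset: 150 flowers, 4 numeric features, 3 classes.
X, y = load_iris(return_X_y=True)

# Hold out a quarter of the rows so evaluation uses data the model has not seen.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Preprocessing and model chained in one pipeline, so the scaler is fitted
# on the training data only.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Mean accuracy on the held-out rows.
accuracy = model.score(X_test, y_test)
print(f"Test accuracy: {accuracy:.2f}")
```

Deep Learning frameworks such as TensorFlow and PyTorch follow the same split, fit, and evaluate idea, but the model and training loop are defined more explicitly.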

5. Big Data Technologies

· Hadoop: An open-source framework for distributed storage and processing of very large datasets across clusters of computers.

o Why learn it: Fundamental for understanding Big Data architectures, though it is now often used alongside Spark rather than on its own.

· Apache Kafka: A distributed streaming platform that allows for building real-time data pipelines and streaming applications.

o Why learn it: Crucial for handling real-time data ingestion and processing, a growing trend in Data Science.

6. Cloud Platforms

· AWS (Amazon Web Services), Microsoft Azure, Google Cloud Platform (GCP): These cloud providers offer a vast array of services for Data Science and AI, including scalable computing, storage, databases, ML platforms (e.g., Amazon SageMaker, Azure Machine Learning, Google Cloud Vertex AI), and Big Data services.

o Why learn them: Cloud proficiency is becoming a standard requirement for deploying and managing Data Science solutions at scale.

7. Version Control

· Git & GitHub/GitLab/Bitbucket: Git is a distributed version control system used for tracking changes in source code during software development. Platforms like GitHub host Git repositories and facilitate collaboration.

o Why learn it: Essential for collaborative Data Science projects, tracking code changes, and managing different versions of models and datasets.

8. Integrated Development Environments (IDEs) / Notebooks

· Jupyter Notebook/JupyterLab: Interactive computing environments that allow Data Scientists to combine code, visualizations, and narrative text in a single document.

o Why learn them: Widely used for exploratory data analysis, prototyping, and sharing Data Science work.

· VS Code (Visual Studio Code): A popular code editor with extensive extensions for Python, R, and Data Science, offering a powerful and customizable environment.

o Why learn it: Provides a robust environment for writing production-ready code and managing complex projects.

Emerging & Complementary Tools (AI's Influence)

The rise of AI is also bringing new categories of tools into the Data Scientist's arsenal:

· AutoML Platforms: Tools that automate parts of the Machine Learning pipeline, from feature engineering to model selection and hyperparameter tuning. Examples include Google Cloud AutoML, H2O.ai, and DataRobot.

o Why learn them: Accelerate model development and allow Data Scientists to focus on problem framing and interpretation.

· MLOps Tools: Tools and practices for deploying, monitoring, and maintaining Machine Learning models in production. Examples include MLflow, Kubeflow, and DVC.

o Why learn them: Crucial for ensuring the reliability, scalability, and ethical use of AI models in real-world applications.

· Generative AI / Large Language Models (LLMs): While not traditional Data Science tools, LLMs like ChatGPT and Gemini are increasingly used by Data Scientists for tasks like code generation, data summarization, brainstorming, and even assisting with documentation.

o Why learn them: Enhance productivity and offer new ways to interact with and derive insights from data, especially through Prompt Engineering.

Beyond the Tools: Essential Soft Skills & Methodologies

While mastering tools is vital, the most successful Data Scientists also possess strong soft skills:

· Problem-Solving: The ability to translate business problems into data-driven questions.

· Communication & Storytelling: Effectively conveying complex insights to non-technical stakeholders.

· Domain Expertise: Understanding the specific industry or business context to apply Data Science effectively.

· Ethical Reasoning: Awareness of data privacy, bias in AI, and responsible AI deployment.

Uncodemy Courses for Mastering Data Science Tools

Uncodemy offers comprehensive programs designed to equip you with the skills needed to master these essential Data Science tools:

· Data Science Courses: This flagship program provides a holistic understanding of the entire Data Science lifecycle. You'll gain hands-on proficiency in Python programming, statistics, data visualization (using Matplotlib, Seaborn, and potentially BI tools), machine learning, deep learning (with frameworks like TensorFlow/PyTorch), Natural Language Processing (NLP), and data wrangling (with Pandas, NumPy). This program is ideal for becoming a well-rounded Data Scientist.

· AI & Machine Learning Courses: These courses delve deeper into the theoretical and practical aspects of Artificial Intelligence and Machine Learning algorithms. You'll gain expertise in building, training, and deploying various AI models using frameworks like TensorFlow and PyTorch, which are critical for advanced Data Science applications.

· Python Programming Course: As Python is the lingua franca for Data Science and AI, Uncodemy's Python Programming course provides the indispensable coding skills needed to implement AI algorithms, manipulate large datasets, and build data pipelines.

· Prompt Engineering Course: This course is increasingly relevant for Data Scientists who want to leverage Large Language Models (LLMs) effectively for tasks like code generation, data summarization, and brainstorming within their Data Science projects.

Conclusion

In 2026, the toolkit for a Data Scientist is more diverse and powerful than ever. Mastering programming languages like Python and SQL, essential libraries like Pandas and NumPy, visualization tools like Matplotlib and Tableau, and Machine Learning frameworks like Scikit-learn and TensorFlow/PyTorch is fundamental. Furthermore, understanding Big Data technologies, cloud platforms, and emerging AI-driven tools like AutoML and MLOps will differentiate top professionals. By continuously learning and adapting to these advancements, and by leveraging comprehensive programs like those offered by Uncodemy, you can ensure a successful and impactful career in the dynamic field of Data Science.