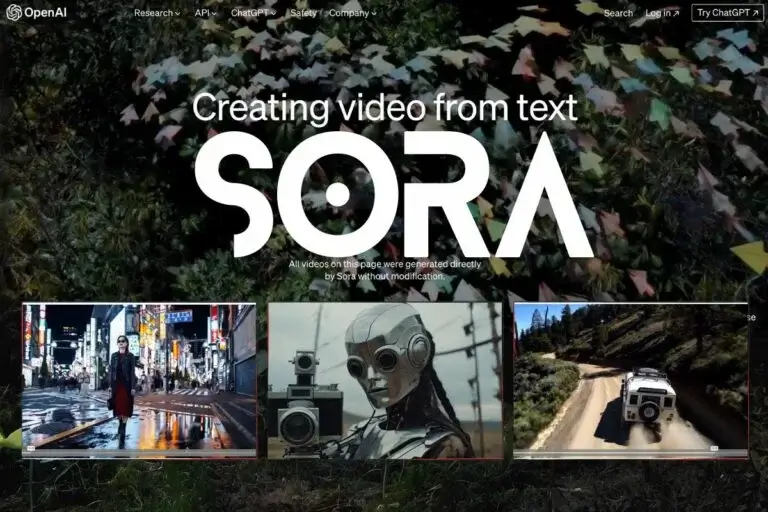

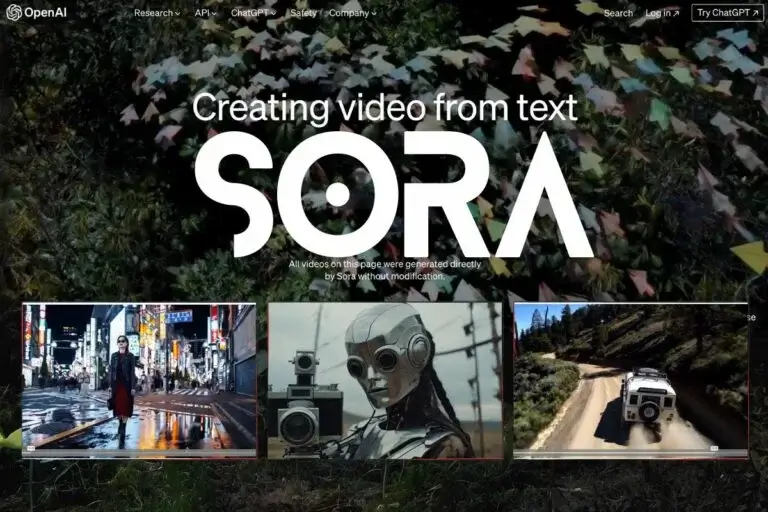

Multimodal AI Models: What They Are and Why They Matter

Understanding Sora's Core Technology

Sora is built on diffusion models, a generative AI architecture that has proven particularly effective for creating high-quality visual content. The system starts with random noise and gradually refines it, using a learned process that reverses the noise added to training data. This approach allows Sora to generate videos that are not only visually appealing but also temporally consistent, meaning that objects and scenes maintain their appearance and behavior across frames.
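The denoising idea can be sketched with a toy example. This is a minimal illustration, not Sora's implementation: the "frame" is a short list of numbers, the noise schedule is an assumed linear one, and `oracle_noise_estimate` is a hypothetical stand-in for the trained network (it is handed the clean signal, which a real model never sees). The reverse loop uses a deterministic DDIM-style update.

```python
import math
import random


def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.05):
    """Cumulative signal-retention factors for an assumed linear noise schedule."""
    alpha_bars = []
    running = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / max(num_steps - 1, 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(clean, alpha_bar, rng):
    """Forward process: blend the clean values with Gaussian noise."""
    return [
        math.sqrt(alpha_bar) * c + math.sqrt(1.0 - alpha_bar) * rng.gauss(0.0, 1.0)
        for c in clean
    ]


def oracle_noise_estimate(noisy, clean, alpha_bar):
    """Stand-in for the trained network: it is given the clean signal,
    so it can compute exactly which noise would explain the noisy input."""
    return [
        (x - math.sqrt(alpha_bar) * c) / math.sqrt(1.0 - alpha_bar)
        for x, c in zip(noisy, clean)
    ]


def denoise(start, clean, alpha_bars):
    """Reverse process: walk from the noisiest step back to step zero."""
    x = list(start)
    for t in range(len(alpha_bars) - 1, -1, -1):
        ab_t = alpha_bars[t]
        ab_prev = alpha_bars[t - 1] if t > 0 else 1.0
        eps = oracle_noise_estimate(x, clean, ab_t)
        # Estimate the clean signal implied by the current noise estimate.
        x0_hat = [
            (xi - math.sqrt(1.0 - ab_t) * e) / math.sqrt(ab_t)
            for xi, e in zip(x, eps)
        ]
        # Re-noise that estimate to the (lower) noise level of the previous step.
        x = [
            math.sqrt(ab_prev) * x0 + math.sqrt(1.0 - ab_prev) * e
            for x0, e in zip(x0_hat, eps)
        ]
    return x


rng = random.Random(0)
clean_frame = [0.2, -0.5, 0.9]
alpha_bars = make_alpha_bars(200)
pure_noise = [rng.gauss(0.0, 1.0) for _ in clean_frame]
recovered = denoise(pure_noise, clean_frame, alpha_bars)
```

With a perfect noise estimate the loop recovers the clean values from pure noise. A real model has to learn that estimate from data, and the quality of the generated video depends on how well it does so.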

The model's architecture incorporates transformer technology, similar to what powers large language models like GPT-4, but adapted specifically for processing visual and temporal information. This combination allows Sora to understand both the spatial relationships within individual frames and the temporal relationships between frames, creating videos that flow naturally and maintain visual coherence throughout their duration.
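The article does not say how video is turned into transformer input. One common approach for video transformers is to cut the clip into small blocks that span both space and time, so each token carries spatial and temporal information together. The sketch below assumes a grayscale video stored as nested lists indexed `[frame][row][column]`; the function name and patch sizes are hypothetical.

```python
def video_to_spacetime_patches(video, pt, ph, pw):
    """Split a [frame][row][column] video into flattened space-time patches.

    pt, ph, pw are the patch extents in frames, rows, and columns.
    Each returned token is a flat list of pt * ph * pw pixel values.
    """
    frames, height, width = len(video), len(video[0]), len(video[0][0])
    if frames % pt or height % ph or width % pw:
        raise ValueError("video dimensions must be divisible by the patch size")
    tokens = []
    for t0 in range(0, frames, pt):
        for y0 in range(0, height, ph):
            for x0 in range(0, width, pw):
                token = [
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ]
                tokens.append(token)
    return tokens


# A tiny 4-frame, 4x4 video whose pixel values encode their own position.
video = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)] for t in range(4)]
tokens = video_to_spacetime_patches(video, pt=2, ph=2, pw=2)
print(len(tokens), len(tokens[0]))  # 8 tokens, each holding 8 pixel values
```

Because every token mixes pixels from neighboring frames, attention between tokens can relate positions within a frame and positions across frames with the same mechanism.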

One of the most impressive aspects of Sora's output is how physically plausible it often looks. When generating a video of a ball bouncing, for example, Sora doesn't just create random motion; the result typically looks consistent with gravity, momentum, and collision dynamics, which makes the bouncing appear realistic and natural. This apparent physics awareness extends to more complex scenarios, allowing the model to create believable interactions between multiple objects and realistic responses to environmental factors.
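For comparison, here is what the bouncing-ball dynamics look like when written out explicitly. Nothing in the article suggests Sora runs a simulator like this; the sketch only shows the behavior a plausible-looking video has to match: acceleration under gravity, and a rebound that loses some energy on each impact. The time step and restitution value are assumptions chosen for illustration.

```python
def simulate_bounce(height, dt=0.01, steps=500, gravity=9.81, restitution=0.8):
    """Return the ball's height at each time step (meters, seconds).

    restitution is the fraction of speed kept after hitting the floor.
    """
    y, v = height, 0.0
    trajectory = []
    for _ in range(steps):
        v -= gravity * dt          # gravity changes velocity
        y += v * dt                # velocity changes position
        if y < 0.0:                # collision with the floor
            y = 0.0
            v = -v * restitution   # reverse direction, lose some speed
        trajectory.append(y)
    return trajectory


heights = simulate_bounce(1.0)
```

Each successive peak in `heights` is lower than the last. A generated clip in which the ball rebounds higher than it was dropped would look wrong to most viewers, even if they could not say why.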

The training process for Sora involved analyzing vast amounts of video data to learn patterns in how objects move, how lighting changes over time, and how different elements interact within visual scenes. This extensive training enables the model to generate videos that capture subtle details like the way light reflects off surfaces, how shadows move as objects change position, and how different materials behave under various conditions.

Creative Applications and Content Generation

The creative potential of Sora has excited filmmakers, advertisers, educators, and content creators across various industries. The model can generate everything from simple product demonstrations to complex narrative sequences, opening up new possibilities for visual storytelling that were previously limited by budget, location, or technical constraints. With tools like Sora, independent creators could produce high-quality video content without expensive equipment, large production teams, or elaborate sets.

In the advertising industry, Sora offers the potential to create compelling marketing content quickly and cost-effectively. Brands can generate product videos that showcase their offerings in various settings and scenarios without the need for physical prototypes or expensive photo shoots. This capability is particularly valuable for testing different creative concepts and messaging approaches before committing to full-scale production.

Educational content creation represents another area where Sora's capabilities could have significant impact. Complex scientific concepts, historical events, and abstract ideas can be visualized in ways that make them more accessible and engaging for learners. Teachers and educational content creators can generate custom videos that illustrate specific concepts or demonstrate processes that would be difficult or impossible to capture through traditional filming methods.

The entertainment industry is also exploring how Sora might be integrated into existing production workflows. While the technology is unlikely to replace human creativity and storytelling expertise, it could serve as a powerful tool for pre-visualization, concept development, and creating specific scenes or effects that would be challenging to produce through conventional means.

Technical Capabilities and Limitations

Sora demonstrates impressive technical capabilities that set it apart from earlier video generation models. The system can create videos up to one minute in length while maintaining consistent quality and coherence throughout the entire sequence. This extended duration capability is particularly significant because maintaining consistency across many frames presents substantial technical challenges that previous models struggled to overcome.
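To get a sense of scale, the sketch below counts the frames in a one-minute clip and computes a crude frame-to-frame change measure. The frame rates are assumptions (the article does not state one), and `mean_frame_change` is a hypothetical helper, not an established consistency metric.

```python
def frame_count(duration_seconds, fps):
    """Number of frames in a clip of the given length."""
    return round(duration_seconds * fps)


def mean_frame_change(video):
    """Average absolute per-pixel difference between consecutive frames.

    video is indexed [frame][row][column]; returns one value per frame pair.
    """
    changes = []
    for prev, curr in zip(video, video[1:]):
        total = sum(
            abs(a - b)
            for row_prev, row_curr in zip(prev, curr)
            for a, b in zip(row_prev, row_curr)
        )
        changes.append(total / (len(prev) * len(prev[0])))
    return changes


print(frame_count(60, 24))  # 1440 frames at an assumed 24 fps
print(frame_count(60, 30))  # 1800 frames at an assumed 30 fps
```

A one-minute clip therefore means keeping well over a thousand frames mutually consistent. A sudden spike in a measure like `mean_frame_change` is one simple signal that an object has flickered or changed appearance between frames.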

The model shows remarkable versatility in terms of the types of content it can generate. Sora can create videos featuring people, animals, objects, and environments, handling complex scenes with multiple interacting elements. The system can generate content in various styles, from photorealistic footage to more stylized or artistic approaches, depending on the text prompt provided.

However, like all current AI technologies, Sora has certain limitations that users should understand. The model sometimes struggles with generating text that appears within videos, often producing garbled or incorrect lettering when signs, labels, or other text elements are part of the scene. Additionally, while Sora demonstrates impressive physics understanding in many scenarios, it can occasionally produce unrealistic interactions or movements, particularly in complex scenes with many interacting elements.

The model also faces challenges with generating content involving fine motor skills or intricate hand movements, sometimes producing results where fingers or hands don't move in entirely natural ways. These limitations reflect the current state of AI technology and the inherent complexity of accurately modeling all aspects of real-world physics and human behavior.

Implications for Professional Development

The emergence of Sora and similar text-to-video AI models is creating new opportunities and challenges for professionals across various creative and technical fields. Content creators, marketers, educators, and technologists are beginning to explore how these tools can enhance their work and create new possibilities for visual communication and storytelling.

For professionals looking to stay current with these rapidly evolving technologies, understanding the capabilities and applications of AI-powered video generation is becoming increasingly important. This includes learning how to craft effective prompts that generate desired results, understanding the technical limitations and ethical considerations of AI-generated content, and developing workflows that integrate these tools with existing creative processes.
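One way to make prompt writing repeatable is to treat a prompt as structured fields rather than free text. The sketch below is a hypothetical helper, not part of any Sora interface; the field names are assumptions about what a text-to-video prompt usually needs to pin down.

```python
from dataclasses import dataclass


@dataclass
class VideoPrompt:
    """Hypothetical container for the parts of a text-to-video prompt."""
    subject: str
    action: str
    setting: str
    style: str = ""
    camera: str = ""

    def render(self):
        """Join the fields into a single prompt string."""
        parts = [f"{self.subject} {self.action} {self.setting}"]
        if self.style:
            parts.append(f"Style: {self.style}")
        if self.camera:
            parts.append(f"Camera: {self.camera}")
        return ". ".join(parts) + "."


prompt = VideoPrompt(
    subject="A red rubber ball",
    action="bouncing down",
    setting="a stone staircase at dusk",
    style="photorealistic",
    camera="slow tracking shot",
)
print(prompt.render())
# A red rubber ball bouncing down a stone staircase at dusk. Style: photorealistic. Camera: slow tracking shot.
```

Keeping the fields separate makes it easy to vary one element at a time, such as the camera movement, and compare the results.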

The field of AI and machine learning continues to evolve rapidly, with new models and capabilities emerging regularly. For those interested in developing expertise in this area, comprehensive programs like the Deep Learning course in Noida provide structured learning opportunities that cover both the theoretical foundations and practical applications of advanced AI technologies, preparing learners for careers in this dynamic field.

Ethical Considerations and Responsible Use

The powerful capabilities of Sora raise important questions about the responsible use of AI-generated video content. The potential for creating realistic videos that depict events that never occurred or people saying things they never said presents significant challenges for information verification and media literacy. These concerns are particularly relevant in an era where misinformation and deepfakes are already problematic issues.

OpenAI has acknowledged these concerns and implemented safety measures and usage guidelines for Sora. During the initial release phase, the company restricted access to researchers, creative professionals, and other stakeholders who can help identify potential risks and develop best practices for responsible deployment.

The development of detection tools and watermarking systems that can identify AI-generated content is an active area of research that will be crucial for maintaining trust in digital media. As Sora and similar technologies become more widely available, the importance of digital literacy and critical evaluation of video content will continue to grow.
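To make the watermarking idea concrete, here is a deliberately simple least-significant-bit scheme. It is an illustration only: the article does not describe which watermarking methods are used for Sora, and this scheme would not survive compression or editing, so it should not be read as a description of any production system. Pixels are assumed to be integers from 0 to 255.

```python
def embed_watermark(pixels, bits):
    """Hide a bit sequence in the lowest bit of the first len(bits) pixels."""
    if len(bits) > len(pixels):
        raise ValueError("not enough pixels to hold the watermark")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the lowest bit, then set it
    return marked


def extract_watermark(pixels, length):
    """Read back the lowest bit of the first `length` pixels."""
    return [p & 1 for p in pixels[:length]]


original = [200, 13, 54, 255, 0, 77]
signature = [1, 0, 1, 1]
marked = embed_watermark(original, signature)
print(marked)                        # [201, 12, 55, 255, 0, 77]
print(extract_watermark(marked, 4))  # [1, 0, 1, 1]
```

No pixel changes by more than one intensity level, so the mark is invisible to a viewer but readable by software that knows where to look. Practical schemes aim for the same property while also surviving re-encoding, cropping, and other edits.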

Content creators and organizations using AI-generated video must also consider questions of authenticity, disclosure, and intellectual property. Establishing clear guidelines about when and how AI-generated content should be labeled, and ensuring that such content doesn't infringe on existing copyrights or misrepresent real people or events, will be essential for responsible adoption of these technologies.

The Future of AI Video Generation

Sora represents a significant milestone in AI video generation, but it's likely just the beginning of what's possible in this field. Future developments may include even longer video generation capabilities, higher resolution outputs, better physics simulation, and improved handling of complex scenes with multiple interacting elements. Integration with other AI technologies, such as audio generation and natural language processing, could enable the creation of complete multimedia experiences from simple text descriptions.

The democratization of video creation through AI tools like Sora has the potential to fundamentally change how visual content is produced and consumed. As these technologies become more accessible and user-friendly, we may see an explosion of creative content from individuals and organizations that previously lacked the resources or technical expertise to produce high-quality videos.

Research and development in this field continue to advance rapidly, with model efficiency, output quality, and user control all active areas of focus. Future versions of text-to-video AI models may offer finer control over specific aspects of generated content, allowing creators to adjust elements like lighting, camera angles, and character actions.

Conclusion: Embracing the Video Generation Revolution

Sora represents a major advance in AI technology that is reshaping our understanding of what's possible in automated content creation. Its ability to generate high-quality, physically plausible videos from simple text descriptions opens up possibilities for creativity, education, and communication that were previously out of reach for most creators. The technology has limitations and raises important ethical considerations that must be carefully addressed, but its potential to democratize video creation and enhance human creativity is considerable.

As Sora and similar technologies continue to evolve and become more widely available, individuals and organizations across various industries will need to adapt to this new reality. This includes developing new skills for working with AI-generated content, establishing ethical guidelines for responsible use, and exploring creative applications that leverage these powerful capabilities while maintaining authenticity and trust.

The future of video creation is being written today, and Sora is playing a leading role in that story. By understanding its capabilities, limitations, and implications, we can better prepare for a future where the boundary between human and AI creativity becomes increasingly fluid, opening up new possibilities for visual storytelling and communication that benefit creators and audiences alike.