Top 5 Lightweight AI Models for Mobile Applications: Revolutionizing On-Device Intelligence

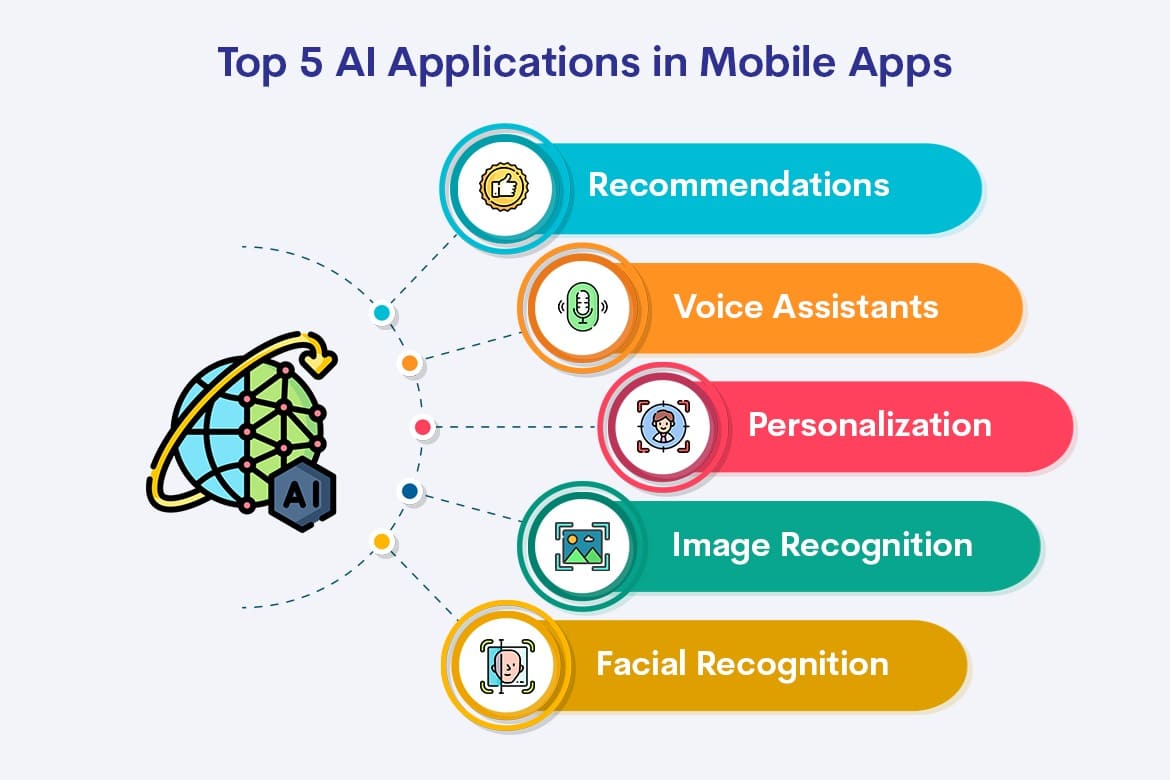

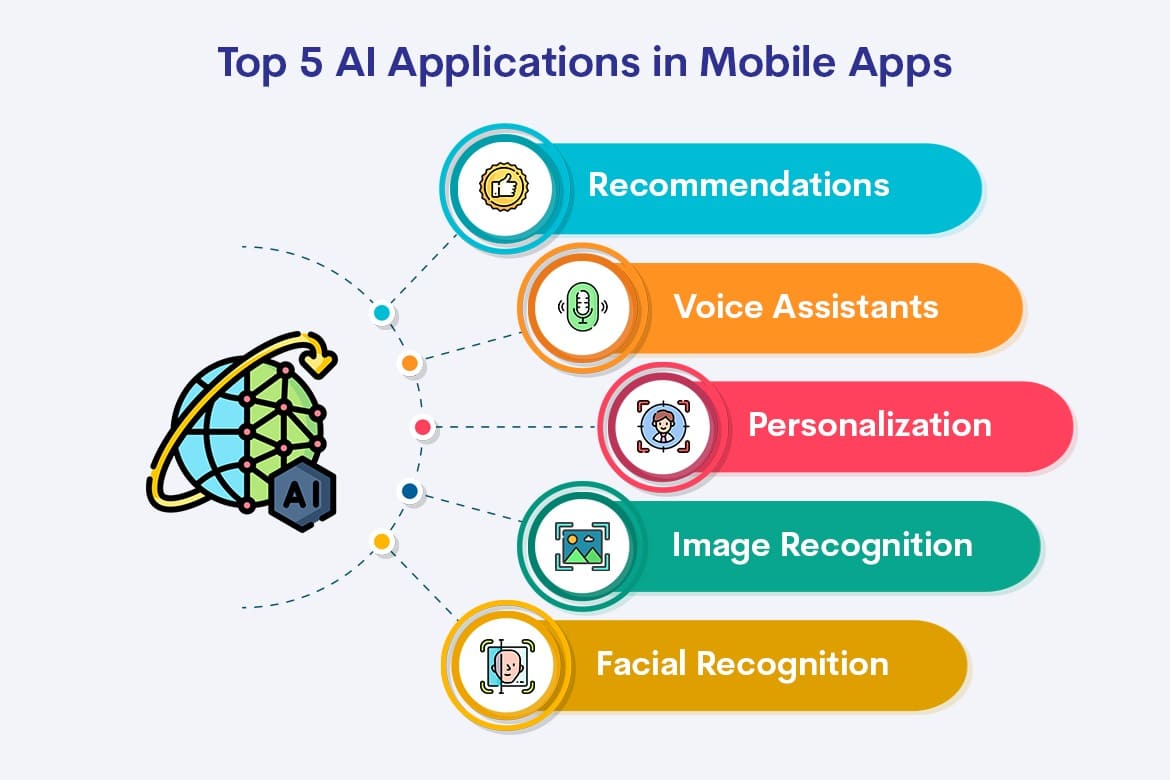

The evolution of mobile AI has been driven by the recognition that not all intelligent features require constant internet connectivity or cloud-based processing. Users expect their devices to respond instantly, work offline, and maintain privacy by processing sensitive data locally. This has led to the development of highly optimized AI models that can run efficiently on mobile processors while delivering impressive performance across various applications, from image recognition and natural language processing to predictive text and voice assistance.

Understanding lightweight AI models becomes crucial for developers, businesses, and technology enthusiasts who want to create mobile applications that truly leverage the power of artificial intelligence. These models represent years of research and optimization, combining advanced machine learning techniques with practical engineering solutions to overcome the inherent limitations of mobile computing environments.

MobileBERT: Revolutionizing Natural Language Processing on Mobile

MobileBERT stands as one of the most significant breakthroughs in mobile natural language processing, offering a compressed version of the powerful BERT model specifically designed for mobile deployment. This model demonstrates how sophisticated language understanding can be achieved within the constraints of mobile hardware without sacrificing too much accuracy or functionality.

The strength of MobileBERT lies in architectural optimizations that shrink the model while preserving most of what makes BERT effective. Rather than simply removing layers, MobileBERT keeps a deep stack of transformer blocks but makes each one much narrower by using bottleneck structures, and it is trained by transferring knowledge from a larger teacher model, a form of knowledge distillation. Its authors report a model roughly 4.3 times smaller and 5.5 times faster than BERT-Base, with competitive results on standard language understanding benchmarks. This makes it well suited to applications such as smart keyboards, text classification, sentiment analysis, and even basic chatbot functionality directly on mobile devices.
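To make the knowledge distillation idea concrete, here is a minimal sketch of the generic soft-label distillation loss, in which a small student model is trained to match the softened output distribution of a larger teacher. It is an illustration under stated assumptions: the function names, the example logits, and the temperature value are all hypothetical, and the training recipe actually used for MobileBERT includes additional objectives that are not shown here.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax over a list of logits, softened by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    # Scaling by T^2 is the usual convention to keep gradient
    # magnitudes comparable across temperatures.
    return kl * temperature ** 2

# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.0, 0.5]
good_student = [3.5, 1.2, 0.4]   # roughly agrees with the teacher
poor_student = [0.5, 1.0, 4.0]   # disagrees with the teacher

print("good student loss:", distillation_loss(teacher, good_student))
print("poor student loss:", distillation_loss(teacher, poor_student))
```

A student whose predictions track the teacher's gets a loss near zero, while one that disagrees is penalized more heavily. In practice this term is usually combined with the ordinary task loss.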

What makes MobileBERT particularly appealing for mobile developers is its versatility across different natural language processing tasks. The model can handle text classification, named entity recognition, and question answering with remarkable efficiency. Mobile applications incorporating MobileBERT can provide intelligent text processing features that work seamlessly offline, ensuring consistent user experiences regardless of network connectivity. The model's ability to understand context and nuance in human language makes it invaluable for creating more intuitive and responsive mobile interfaces.

The practical applications of MobileBERT extend beyond simple text processing. Email applications can use it for intelligent categorization and priority detection, while social media apps can implement on-device content moderation and sentiment analysis. Note-taking applications can use it for content organization and, with a suitable task-specific head, extractive summarization that selects key sentences rather than generating new text. These examples show how lightweight AI can enhance productivity tools without requiring constant cloud connectivity.

MobileNet: Pioneering Efficient Computer Vision

MobileNet has established itself as a widely used baseline for mobile computer vision, offering a strong balance between model size, computational efficiency, and visual recognition accuracy. This model family represents a fundamental shift in how computer vision models are designed, prioritizing efficiency and mobile deployment from the ground up rather than simply compressing existing large models.

The architecture of MobileNet employs depthwise separable convolutions, a technique that dramatically reduces the number of parameters and computational requirements while maintaining strong performance in image classification and object detection tasks. This innovative approach allows mobile devices to perform sophisticated visual analysis in real-time, opening up possibilities for augmented reality applications, intelligent camera features, and automated image organization systems.
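The savings from depthwise separable convolutions can be checked with simple arithmetic. The sketch below counts weights (ignoring biases) for a standard convolution and for its depthwise separable replacement; the layer dimensions are arbitrary values chosen for illustration, not taken from any particular MobileNet version.

```python
def standard_conv_params(kernel, in_channels, out_channels):
    """Weights in a standard k x k convolution (biases ignored)."""
    return kernel * kernel * in_channels * out_channels

def depthwise_separable_params(kernel, in_channels, out_channels):
    """Weights in a depthwise k x k conv followed by a 1x1 pointwise conv."""
    depthwise = kernel * kernel * in_channels  # one k x k filter per input channel
    pointwise = in_channels * out_channels     # 1x1 conv that mixes channels
    return depthwise + pointwise

# Illustrative layer: 3x3 kernel, 128 input channels, 256 output channels.
k, c_in, c_out = 3, 128, 256
std = standard_conv_params(k, c_in, c_out)        # 294912 weights
sep = depthwise_separable_params(k, c_in, c_out)  # 33920 weights

print(f"standard: {std}, separable: {sep}, reduction: {std / sep:.1f}x")
```

The reduction factor works out to 1 / (1/c_out + 1/k²), so with 3x3 kernels it approaches 9 as the number of output channels grows.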

The versatility of MobileNet makes it suitable for a wide range of mobile applications. Camera apps can implement real-time object recognition and scene understanding, while shopping applications can offer visual search capabilities that allow users to find products by simply taking photos. Social media platforms can use MobileNet for automatic image tagging and content categorization, enhancing user experience while reducing manual effort.

Later versions of MobileNet have pushed the boundaries further. MobileNetV3, for example, used hardware-aware neural architecture search to tune the network structure for mobile phone processors. This evolution demonstrates the ongoing commitment to making computer vision accessible and practical for mobile applications across various devices and performance tiers.

SqueezeNet: Maximizing Efficiency in Image Classification

SqueezeNet takes an approach to image classification that prioritizes extreme parameter efficiency. The original work reports AlexNet-level accuracy on ImageNet with a far smaller model, which makes it an appealing choice for mobile applications where storage and download size are tightly constrained.

The key innovation in SqueezeNet lies in its use of Fire modules. Each Fire module has a squeeze layer of 1x1 filters that reduces the number of channels, followed by an expand layer that mixes 1x1 and 3x3 filters, which cuts the parameter count while maintaining model expressiveness. With this design, SqueezeNet reaches AlexNet-level accuracy with about 50 times fewer parameters, and its authors report a model of under 0.5 MB once additional compression is applied. For mobile developers, this translates to faster app downloads and reduced storage requirements. Battery impact depends more on the amount of computation per inference than on file size, so it should be measured rather than assumed.
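A quick parameter count shows why squeezing channels before the 3x3 filters pays off. The sketch below compares a Fire module against a plain 3x3 convolution producing the same number of output channels. Biases are ignored, and the channel sizes are assumptions chosen for illustration.

```python
def fire_module_params(in_channels, squeeze, expand_1x1, expand_3x3):
    """Weights in a Fire module (biases ignored)."""
    squeeze_params = in_channels * squeeze       # 1x1 squeeze layer
    expand_1x1_params = squeeze * expand_1x1     # 1x1 half of the expand layer
    expand_3x3_params = 9 * squeeze * expand_3x3 # 3x3 half of the expand layer
    return squeeze_params + expand_1x1_params + expand_3x3_params

def plain_conv3x3_params(in_channels, out_channels):
    """Weights in an ordinary 3x3 convolution (biases ignored)."""
    return 9 * in_channels * out_channels

# Illustrative sizes: 96 input channels squeezed to 16,
# then expanded to 64 + 64 = 128 output channels.
fire = fire_module_params(in_channels=96, squeeze=16, expand_1x1=64, expand_3x3=64)
plain = plain_conv3x3_params(in_channels=96, out_channels=128)

print(f"fire module: {fire}, plain 3x3 conv: {plain}, ratio: {plain / fire:.1f}x")
```

Most of the saving comes from the 3x3 filters seeing only 16 squeezed channels instead of all 96 inputs.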

The practical benefits of SqueezeNet extend beyond just technical specifications. Mobile applications can implement image recognition features without worrying about storage constraints or slow loading times. Photo gallery apps can use SqueezeNet for automatic image categorization and search functionality, while productivity apps can use it to classify scanned documents by type entirely offline. Full text recognition would need a separate, dedicated model.

SqueezeNet's efficiency makes it particularly valuable for applications that need to process multiple images quickly or operate in resource-constrained environments. Security applications can use it for real-time facial recognition and access control, while educational apps can implement interactive visual learning tools that respond immediately to user inputs. The model's small size also makes it suitable for embedding in IoT devices and edge computing scenarios where traditional large models would be impractical.

TensorFlow Lite: Democratizing Mobile AI Development

TensorFlow Lite has emerged as a comprehensive framework rather than a single model, providing tools and optimizations that make it easier to deploy various AI models on mobile devices. This platform represents Google's commitment to making mobile AI development accessible to developers regardless of their machine learning expertise, offering pre-trained models and optimization tools that simplify the deployment process.

The strength of TensorFlow Lite lies in its ecosystem of tools and pre-optimized models that cover a wide range of common mobile AI use cases. From image classification and object detection to text classification and pose estimation, TensorFlow Lite provides ready-to-use solutions that developers can integrate into their applications with minimal effort. The framework also includes optimization techniques such as quantization and pruning that can further reduce model size and improve performance.
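To illustrate what quantization does to a model's weights, here is a minimal pure-Python sketch of affine 8-bit quantization: floating-point values are mapped to integers in the range -128 to 127 using a scale and a zero point, then mapped back. This is a conceptual illustration only. It does not call TensorFlow Lite, the function names are hypothetical, and production toolchains use more elaborate schemes.

```python
def quantize_int8(values):
    """Map floats to int8 with an affine (scale, zero_point) scheme."""
    lo = min(min(values), 0.0)  # the range must include zero
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point))  # clamp to int8
        for v in values
    ]
    return quantized, scale, zero_point

def dequantize(quantized, scale, zero_point):
    """Recover approximate floats from int8 values."""
    return [(q - zero_point) * scale for q in quantized]

# Hypothetical weights from a single layer.
weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

print("int8 values:", q)
print("restored:   ", [round(r, 4) for r in restored])
```

Each weight now needs one byte instead of four, at the cost of a rounding error on the order of the scale. That is why quantized models are smaller and often faster, with a small accuracy loss that should be validated per model.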

What sets TensorFlow Lite apart is its focus on practical deployment considerations. The framework provides tools for converting existing TensorFlow models to mobile-optimized formats, handles hardware acceleration where available, and offers debugging and profiling tools that help developers optimize their implementations. This comprehensive approach makes it easier for development teams to incorporate AI features into their mobile applications without requiring deep expertise in machine learning optimization.

The versatility of TensorFlow Lite enables developers to create sophisticated mobile applications that leverage multiple AI capabilities simultaneously. Fitness apps can combine pose estimation with activity recognition, while language learning apps can integrate speech recognition with text analysis. The framework's modular approach allows developers to mix and match different AI capabilities based on their specific application requirements.

YOLO Nano: Real-Time Object Detection for Mobile

YOLO Nano is an effort to bring real-time object detection to mobile and embedded devices, offering a highly compact network in the YOLO family of single-shot detectors with a reported model size of around 4 MB. It demonstrates how sophisticated computer vision capabilities can be adapted for constrained hardware while keeping the fast single-pass inference that makes YOLO so valuable for interactive applications.

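The development of YOLO Nano followed a human-machine collaborative design process, in which a hand-designed prototype based on YOLO design principles was refined by machine-driven design exploration. The resulting architecture relies on residual projection-expansion-projection (PEP) modules and a lightweight fully-connected attention module to maintain detection accuracy while reducing computational requirements. Related compact detectors use other techniques, such as channel shuffling and ghost modules, toward the same goal. The result is a model that can detect and classify multiple objects quickly on constrained devices, opening up possibilities for augmented reality, driver-assistance features, and interactive gaming experiences.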

The practical applications of YOLO Nano extend across numerous mobile application categories. Retail apps can implement visual search and product recognition features that work in real-time, while safety applications can detect and alert users to potential hazards in their environment. Educational apps can create interactive learning experiences that respond to objects in the real world, while productivity apps can automate document processing and information extraction tasks.

What makes YOLO Nano notable is how much of the original YOLO design's single-pass speed it retains within the constraints of mobile hardware, at the cost of some accuracy relative to full-sized detectors. Achieving this required careful architectural design and optimization of a kind that has become common across mobile AI development.

Implementation Considerations and Best Practices

Successfully implementing lightweight AI models in mobile applications requires careful consideration of various factors beyond just model selection. Device compatibility represents a crucial concern, as different mobile processors and operating systems may have varying levels of support for AI acceleration and optimization. Developers must consider how their chosen models will perform across different device tiers and plan for graceful degradation on older or less powerful hardware.
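One way to plan for graceful degradation is to ship several variants of a model and pick the most capable one the device can support, disabling the feature only when none fits. The sketch below is a hypothetical illustration: the variant names, RAM thresholds, and the `pick_variant` function are assumptions, and a real app would query these capabilities through its platform's APIs.

```python
# Hypothetical model variants, ordered from most to least capable.
VARIANTS = [
    {"name": "full", "min_ram_mb": 6000, "needs_accelerator": True},
    {"name": "quantized", "min_ram_mb": 3000, "needs_accelerator": False},
    {"name": "tiny", "min_ram_mb": 1500, "needs_accelerator": False},
]

def pick_variant(ram_mb, has_accelerator):
    """Return the first (most capable) variant the device can run, or None."""
    for variant in VARIANTS:
        enough_ram = ram_mb >= variant["min_ram_mb"]
        accelerator_ok = has_accelerator or not variant["needs_accelerator"]
        if enough_ram and accelerator_ok:
            return variant["name"]
    return None  # no variant fits: hide or disable the AI feature

print(pick_variant(ram_mb=8000, has_accelerator=True))
print(pick_variant(ram_mb=8000, has_accelerator=False))
print(pick_variant(ram_mb=2000, has_accelerator=False))
print(pick_variant(ram_mb=1000, has_accelerator=True))
```

Keeping the selection logic in one place makes it easier to adjust thresholds after measuring real devices.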

Battery life optimization becomes particularly important when implementing AI features in mobile applications. While lightweight models are designed to be efficient, continuous AI processing can still impact battery performance. Successful implementations often involve intelligent scheduling of AI tasks, using techniques such as adaptive processing rates and strategic caching to minimize power consumption while maintaining responsive user experiences.
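An adaptive processing rate can be as simple as deciding how many camera frames to skip between model runs, based on battery state and how long the last inference took. The following sketch is illustrative only: the thresholds, the 33 ms frame budget, and the `frames_to_skip` function are assumptions, not recommendations from any platform.

```python
def frames_to_skip(battery_fraction, is_charging, last_inference_ms, budget_ms=33.0):
    """Return how many frames to skip between consecutive model runs."""
    if is_charging:
        base = 0   # plugged in: run on every frame
    elif battery_fraction > 0.5:
        base = 1
    elif battery_fraction > 0.2:
        base = 3
    else:
        base = 7   # low battery: run on every eighth frame
    # If an inference takes longer than one frame's budget, skip the
    # frames that arrived while it was running.
    overrun = int(last_inference_ms // budget_ms)
    return base + overrun

print(frames_to_skip(0.9, True, 20.0))   # charging, fast inference
print(frames_to_skip(0.6, False, 70.0))  # on battery, slow inference
print(frames_to_skip(0.1, False, 20.0))  # low battery
```

Between runs, the app can keep showing the last result, which is a simple form of the caching mentioned above.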

Memory management presents another critical consideration for mobile AI applications. Even lightweight models require careful memory allocation and cleanup to prevent performance degradation and crashes. Developers must implement efficient loading and unloading strategies, particularly for applications that use multiple AI models or process large amounts of data.
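For apps that use several models, one possible loading and unloading strategy is a least-recently-used cache with a memory budget. The sketch below assumes a hypothetical `loader` callable that returns a model object and its size. The class name, sizes, and budget are illustrative, and a real implementation would also release native resources when evicting.

```python
from collections import OrderedDict

class ModelCache:
    """Keeps loaded models under a byte budget, evicting the least recently used."""

    def __init__(self, capacity_bytes, loader):
        self.capacity_bytes = capacity_bytes
        self.loader = loader           # callable: name -> (model, size_bytes)
        self._entries = OrderedDict()  # name -> (model, size_bytes), oldest first
        self.used_bytes = 0

    def get(self, name):
        if name in self._entries:
            self._entries.move_to_end(name)  # mark as most recently used
            return self._entries[name][0]
        model, size = self.loader(name)
        # Evict least recently used models until the new one fits.
        # A model larger than the whole budget is still loaded after
        # everything else has been evicted.
        while self._entries and self.used_bytes + size > self.capacity_bytes:
            _, (_, freed) = self._entries.popitem(last=False)
            self.used_bytes -= freed
        self._entries[name] = (model, size)
        self.used_bytes += size
        return model

    def loaded(self):
        return list(self._entries)

# Hypothetical model sizes in arbitrary units.
SIZES = {"classifier": 4, "detector": 6, "text": 5}

def fake_loader(name):
    return f"<{name} model>", SIZES[name]

cache = ModelCache(capacity_bytes=10, loader=fake_loader)
cache.get("classifier")
cache.get("detector")
cache.get("classifier")  # touch: detector is now the least recently used
cache.get("text")        # does not fit, so detector is evicted
print(cache.loaded(), cache.used_bytes)
```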

The integration of AI features into mobile user interfaces requires thoughtful design that makes AI capabilities feel natural and intuitive rather than gimmicky or overwhelming. The most successful mobile AI applications are those where artificial intelligence enhances existing functionality rather than calling attention to itself as a separate feature.

Future Trends and Emerging Opportunities

The landscape of mobile AI continues to evolve rapidly, with new optimization techniques and model architectures emerging regularly. Neural architecture search is becoming increasingly important for creating models that are specifically optimized for mobile deployment, while federated learning approaches are enabling new possibilities for privacy-preserving AI features that learn from user behavior without compromising personal data.
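The core aggregation step in federated learning can be sketched in a few lines: each device trains locally and sends back only its updated weights, and a server combines them in an average weighted by how much data each device used. The example below is a simplified illustration with hypothetical names and toy values. Real systems add secure aggregation, client sampling, and other safeguards.

```python
def federated_average(client_updates):
    """Weighted average of client weight vectors.

    client_updates: list of (weights, num_examples) pairs, where weights
    is a list of floats of the same length for every client.
    """
    total_examples = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        share = n / total_examples  # clients with more data count for more
        for i, w in enumerate(weights):
            averaged[i] += w * share
    return averaged

# Two hypothetical devices: one trained on 10 examples, one on 30.
updates = [
    ([1.0, 2.0], 10),
    ([3.0, 4.0], 30),
]
global_weights = federated_average(updates)
print(global_weights)
```

Only the weight vectors leave the device in this scheme. The raw user data that produced them stays local.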

Edge AI deployment is becoming more sophisticated, with mobile devices increasingly capable of running multiple AI models simultaneously and coordinating their outputs for more complex intelligent behaviors. This trend is enabling new categories of applications that combine multiple AI capabilities in ways that were previously impractical for mobile deployment.

The democratization of AI development tools is making it easier for mobile developers to experiment with and implement AI features without requiring extensive machine learning expertise. This trend is likely to accelerate innovation in mobile AI applications as more developers gain access to powerful yet accessible AI development platforms.

Hardware improvements in mobile processors are also creating new opportunities for more sophisticated AI applications. Dedicated AI accelerators and improved memory architectures are enabling mobile devices to run larger and more capable models while maintaining efficiency and battery life.

Conclusion

The development of lightweight AI models for mobile applications represents one of the most exciting frontiers in artificial intelligence, combining cutting-edge research with practical engineering to create solutions that enhance daily life through intelligent mobile experiences. These models demonstrate that sophisticated AI capabilities need not be limited to cloud-based services or high-end computing hardware, but can be made accessible and practical for everyday mobile applications.

The five technologies discussed in this article, four model architectures and one deployment framework, represent different approaches to solving the challenge of mobile AI deployment, each with its own strengths and ideal use cases. MobileBERT brings natural language understanding to mobile devices, while MobileNet and SqueezeNet enable sophisticated computer vision capabilities. TensorFlow Lite provides a comprehensive development framework, and YOLO Nano delivers object detection at speeds that were previously difficult to achieve on mobile hardware.

The success of these lightweight models points toward a future where AI becomes increasingly integrated into mobile experiences, creating applications that are more intelligent, responsive, and capable of operating independently of cloud services. As these technologies continue to evolve and improve, developers and businesses that understand and leverage them effectively will be well-positioned to create the next generation of innovative mobile applications that truly harness the power of artificial intelligence.