By 2026, the world of data science will have gone through tremendous change. As data volumes grow and the demand for faster, more intelligent solutions becomes a reality, a new generation of AI tools has arisen. These tools are cheaper, more adaptable, and tailored to the unique challenges of different industries. Thanks to advances in machine learning, cloud computing, and natural language processing, much of this tooling requires little or no coding knowledge. Other tools have become more efficient at automating repetitive tasks and improving the accuracy of models.

The advancement of generative AI, explainable AI, and AutoML (Automated Machine Learning) has also led to the adoption of these technologies in both small startups and large organisations. The rise of user-friendly platforms and the democratisation of data science is one of the most apparent trends of 2026. Tools that used to require extensive programming knowledge are now being built with friendlier interfaces and streamlined workflows.

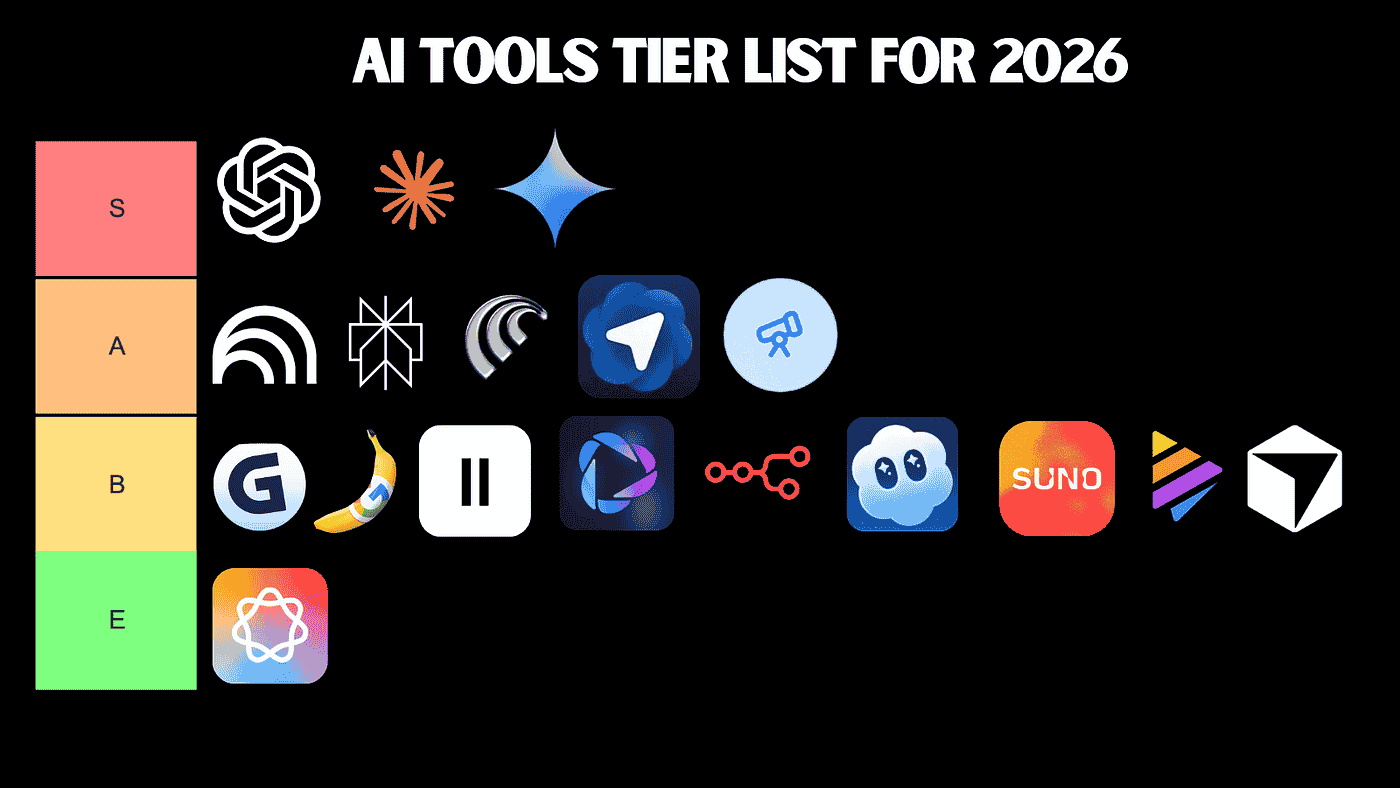

The purpose of this blog is to introduce the reader to a curated list of the best AI tools for data science projects in 2026. It covers a variety of categories, including model development, automation, data cleaning, visualisation, and deployment. All listed tools were chosen for their relevance, popularity, and value across different fields. Are you a student starting out in data science? This guide will help you see which tools are having a meaningful impact and why.

Why AI Tools Matter in Data Science in 2026

With the rising demand for AI tools, many professionals are upskilling through online platforms and local programs like a data science course in Noida to stay competitive in 2026. By 2026, AI tools will have become increasingly pivotal in data science. Faced with exploding data volumes and pressure to make faster, smarter decisions, traditional strategies and decades-old machine learning approaches all too frequently fail to meet modern demands. AI tools are no longer just for building models; they assist with every part of a data science project, including data cleaning, deployment, and monitoring. The transition from manual, labour-intensive processes to automated systems capable of learning, adaptation, and optimisation has been one of the most significant changes in recent years. These more intelligent workflows enable data scientists to focus more on strategy and less on tedious tasks.

Another key factor behind the growing dependency on AI tools is rising expectations of scalability, efficiency, and explainability. Organisations no longer contend with mere gigabytes of data; some are juggling terabytes or even petabytes. Data at that scale calls for tools capable of analysing and processing it in real time without sacrificing accuracy and dependability. Meanwhile, explainable AI has been gaining traction, particularly in fields such as finance, healthcare, and governance, where the rationale behind a model's decision can be as significant as the decision itself. Newer AI tools come with functions that help users understand model behaviour, detect biases, and build trust in AI-driven insights.

The data science landscape has also shifted with the emergence of low-code and no-code platforms. Previously, building effective AI models usually required a thorough knowledge of programming and expertise in complex AI development frameworks. In 2026, however, platforms enable individuals with little coding knowledge to develop strong AI solutions. These tools democratise access to data science, empowering domain experts such as marketers, product managers, and business analysts to contribute directly to model building. This not only speeds up innovation but also closes the gap between technical teams and business users.

Real-time processing and cloud integration have further increased the relevance of AI tools in data science. As a growing number of businesses move their operations to the cloud, tools that are either cloud-native or easy to deploy in the cloud have become a necessity. These tools enable uninterrupted access to data, integrated workflows, and scalable model deployment. Real-time data systems are becoming especially prevalent in specialised fields such as fraud detection, predictive maintenance, and personalised recommendations. AI applications that process streaming data allow organisations to act on real-time insights instead of waiting for batch-processed reports.

Key Categories of AI Tools in Data Science

A broad range of AI-based tools has made the field of data science in 2026 more efficient and accessible. These tools can be grouped into a number of categories according to their core functionality, and each category serves a different purpose in helping data scientists create smarter, faster, and more accurate solutions.

AutoML platforms have gained particular popularity for saving time and effort in building models. Google Cloud AutoML, for example, enables users to create quality models with little code, relying on Google's data-intensive infrastructure. H2O.ai is another popular choice in both industry and academia because it is open-source and offers strong support for machine learning automation. DataRobot is particularly notable for its enterprise AutoML offerings, which automate the entire process from data ingestion through deployment into production systems and also provide robust interpretability capabilities.

For those who prefer to build models manually, frameworks such as PyTorch and TensorFlow remain at the forefront of model building and deployment. PyTorch is well loved for being highly flexible and easy to use, particularly in research and deep learning work. TensorFlow, backed by Google, offers advanced tools for model development and scaling. Hugging Face Transformers has become incredibly popular because it makes working with pre-trained language models easier. Its open-source library covers a large variety of NLP tasks and is compatible with both PyTorch and TensorFlow.

Clean data is a prerequisite to modelling. Messy, unstructured datasets can be handled with data cleaning and preprocessing tools. Trifacta is known for an intuitive user interface that simplifies data wrangling even for non-technical users. Dataprep by Google is built on the Trifacta platform, integrates easily with other Google products, and runs at scale in the cloud. Though older, OpenRefine remains popular in 2026 due to its visibility and data-cleaning power; it is often used in smaller or academic projects.
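To make the idea of data cleaning concrete, here is a minimal sketch in plain Python of the kind of steps these tools automate: trimming whitespace, normalising case, coercing numeric fields, and dropping duplicates. The field names (`name`, `city`, `age`) and the sample records are invented for illustration, and this is not how Trifacta, Dataprep, or OpenRefine work internally.

```python
def clean_records(rows):
    """Trim whitespace, normalise case, coerce ages to int, and drop duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        # Collapse repeated spaces and use consistent capitalisation.
        name = " ".join(row.get("name", "").split()).title()
        city = row.get("city", "").strip().title() or None
        # Keep the age only if it is a clean non-negative integer.
        raw_age = str(row.get("age", "")).strip()
        age = int(raw_age) if raw_age.isdigit() else None
        if not name:
            continue  # a record without a name is unusable here
        key = (name, city, age)
        if key in seen:
            continue  # exact duplicate after normalisation
        seen.add(key)
        cleaned.append({"name": name, "city": city, "age": age})
    return cleaned


# Hypothetical messy input.
raw = [
    {"name": "  alice  SMITH ", "city": "leeds", "age": "34"},
    {"name": "Alice Smith", "city": " Leeds ", "age": "34 "},
    {"name": "bob jones", "city": "", "age": "n/a"},
    {"name": "   ", "city": "Delhi", "age": "29"},
]
cleaned = clean_records(raw)
```

In this sketch the first two rows collapse into one record once normalised, the third keeps its name but gets `None` for the missing city and unparseable age, and the fourth is dropped for lacking a name.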

Visualisation and explainability tools are also essential to data scientists nowadays. Tableau, which now has AI capabilities built in, helps users surface insights through dynamic, smart dashboards. Power BI with Copilot makes data analysis more intuitive by letting users converse with their data in natural language. For model explanation, SHAP and LIME are the commonly adopted tools for interpreting black-box models. In 2026, newer explainable AI (XAI) tools are emerging that provide real-time explanations.
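SHAP builds on Shapley values from game theory, which split a prediction fairly among the input features. The sketch below computes exact Shapley values for a tiny model in plain Python. It is not the SHAP library's API; the `toy_model` function, the all-zero baseline, and the example instance are assumptions made for illustration, and exact enumeration like this is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one prediction.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(instance)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(len(others) + 1):
            # Weight of a coalition of this size in the Shapley formula.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i to this coalition.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(x):
    # Hypothetical score: two linear terms plus one interaction term.
    return 2.0 * x[0] + 1.0 * x[1] + 0.5 * x[0] * x[2]


instance = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, instance, baseline)
```

The attributions sum to the difference between the prediction for `instance` and the prediction for `baseline`, and the interaction term is shared equally between the two features involved in it.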

In the domain of workflow orchestration and MLOps, tools such as MLflow are crucial for running machine learning experiments, tracking them, and sharing models. Kubeflow is a framework that runs on Kubernetes to enable the scaling and deployment of machine learning workflows at production scale. Amazon SageMaker Studio provides an entire ecosystem of tools to develop, label, train, tune, and deploy ML in a single location.

Finally, the emergence of low-code and no-code AI products has brought data science to a broader audience. Microsoft Azure ML Studio offers a drag-and-drop interface that allows rapid experimentation and deployment. Akkio, a smaller platform, targets business users who need to create models without writing code.

Emerging AI Tools in 2026

As of 2026, many new AI tools have made their way into the data science environment, bringing with them new functions that are rapidly gaining momentum. One of those tools is SynthMind AI, a framework for sophisticated synthetic data generation. SynthMind enables data scientists to produce high-quality, realistic data that is statistically accurate while preserving anonymity.

Another emerging player is NeuroPilot Studio, a no-code deep learning platform that makes neural network development accessible to non-experts. It provides drag-and-drop capabilities to create, train, and deploy advanced models without writing a single line of code. It supports real-time collaboration and is therefore suitable for team projects and classroom learning, which makes AI development more democratic.

Not to be overlooked is ExplainEase, an application created to enhance model interpretability. With the growing significance of explainable AI in high-stakes decision-making, ExplainEase provides visual and interactive explanations of black-box as well as white-box models. It integrates easily with ML frameworks such as TensorFlow and PyTorch and has compliance reporting capabilities to support regulatory requirements.

How to Choose the Right Tool

When choosing the appropriate AI tool for a data science task in 2026, a number of factors should be taken into account. The type of project is a key one. For example, a basic classification task might only require a lightweight AutoML framework, whereas a more sophisticated natural language processing project might be better suited to deep learning frameworks such as Hugging Face or TensorFlow.

The amount and nature of data matter just as much. Apache Spark or Dataiku are more appropriate for analysing large-scale distributed data, while small datasets can be handled easily with lighter-weight tools such as scikit-learn or H2O.ai. Certain tools also specialise in either structured or unstructured data, so it is important to understand your data before selecting a platform.

Another important variable is the team skillset. A team that is highly technical and has experience in coding might choose open-source libraries because they have flexibility and control. Conversely, teams that lack extensive experience in programming may be more attracted to low-code or no-code solutions, including Microsoft Azure ML Studio or Akkio, where the interface is easy to use.

The decision is frequently affected by budget constraints as well. Although open-source tools such as PyTorch or MLflow are freely available and completely customisable, they can demand more time and expertise to work with. Commercial tools, by contrast, tend to be feature-rich and come with good customer support, but may prove costly, especially for startups or small teams.

It is also important to know whether the tool is cloud-based or on-premise. The scalability, distributed coordination, and easy access to massive compute resources provided by cloud platforms make them suitable for dynamic teams. But some of the more regulated industries might need the control and security of an on-premise solution.
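The criteria in this section can be summarised as a simple rule-based helper. The sketch below is only an illustration of the reasoning above: the `Project` fields and the `suggest_tools` function are hypothetical, the rules are deliberately coarse, and budget is left out because it depends on pricing the article does not specify.

```python
from dataclasses import dataclass


@dataclass
class Project:
    """Hypothetical project description; all field names are illustrative."""
    task: str               # e.g. "classification" or "nlp"
    large_scale_data: bool  # large, distributed data?
    team_codes: bool        # does the team have programming experience?
    regulated: bool         # strict control and security requirements?


def suggest_tools(project: Project) -> dict:
    """Map the selection criteria above to the tool families the article names."""
    if not project.team_codes:
        modelling = ["Microsoft Azure ML Studio", "Akkio"]
    elif project.task == "nlp":
        modelling = ["Hugging Face", "TensorFlow"]
    else:
        modelling = ["lightweight AutoML framework"]

    if project.large_scale_data:
        data = ["Apache Spark", "Dataiku"]
    else:
        data = ["scikit-learn", "H2O.ai"]

    # Regulated industries may need on-premise control; otherwise default to cloud.
    hosting = "on-premise" if project.regulated else "cloud"
    return {"modelling": modelling, "data": data, "hosting": hosting}


example = Project(task="nlp", large_scale_data=True, team_codes=True, regulated=False)
suggestion = suggest_tools(example)
```

A real decision would weigh these factors against each other rather than apply them independently, so treat the output as a starting point for discussion, not a recommendation.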

Real-World Applications & Use Cases

AI tools have been integrated into practical data science initiatives across various industries, enabling professionals to work smarter, faster, and more accurately. Each domain has distinct needs, and the selection of AI tools is commonly dictated by data complexity, the urgency of predictions, and the degree of automation required.

AI in healthcare is being applied to identify diseases, personalise treatment, and handle patient information more effectively. For example, TensorFlow and PyTorch can be used to develop deep learning networks that analyse medical images such as X-rays or MRIs. These models can help physicians diagnose diseases like cancer or pneumonia with high levels of accuracy. H2O.ai and Google Cloud AutoML are also popular in healthcare initiatives because they automate the machine learning process, enabling even smaller hospitals with scarce resources to develop predictive models. Explainability also plays an essential role in healthcare, and tools such as SHAP and LIME can help medical professionals grasp how a model makes its predictions, leading to better trust and transparency.

Speed, accuracy, and risk management are the top priorities in the finance sector. Data scientists may use platforms such as DataRobot and Amazon SageMaker to handle fraud detection or credit scoring. These tools assist with rapid model development, scalability, and real-time deployment. Finance teams also use MLflow to track experiments and model versions, which ensures that any change can be traced. Visualisation tools such as Tableau and Power BI, now augmented with AI capabilities, present insights clearly to decision-makers. In the case of fraud detection, AI models need to be fast enough to respond to emerging threats. Platforms that assist with ongoing learning and tracking, like Kubeflow or SageMaker Studio, are useful in this regard.

AI tools have also been adopted in marketing and e-commerce to gain insights into customer behaviour and to improve targeting and conversion. The recent popularity of low-code platforms such as Akkio and Obviously.AI has been driven by their ability to let marketers who are not coders build strong predictive systems. They make it easier to determine which customers are more likely to churn, which products will sell best during a sale, and which marketing campaigns will work best. Hugging Face Transformers is increasingly applied to natural language processing tasks, including sentiment analysis, product review classification, and chatbot development. Companies use these tools to interpret customer feedback at scale and adjust their responses accordingly. Data visualisation remains an extremely important aspect of marketing, and Power BI with Copilot allows marketers to develop interactive dashboards that provide automatically generated insights.

AI is being actively used in climate analysis and environmental monitoring to track changes, forecast extreme events, and support sustainable development. Satellite imagery is used to detect deforestation, melting glaciers, or rising sea levels with the help of tools such as Google Earth Engine, combined with TensorFlow or PyTorch. For computationally intensive work such as climate modelling, researchers employ orchestration tools like Kubeflow to coordinate pipelines and workflows over large datasets. AutoML tools such as H2O.ai are used to predict trends in air quality and weather patterns. Such insights benefit not only scientists but also governments and NGOs involved in climate policy and disaster preparedness.

In each of these areas, explainability tools such as SHAP and LIME remain relevant, particularly where decisions affect lives, money, or the environment. They help stakeholders understand how models operate, which inputs matter most, and whether any bias is present. AI tools are no longer restricted to data scientists; they are now used by medical professionals, financial experts, marketers, and environmental researchers, making data science a more collaborative process than ever before.

Conclusion

By 2026, AI systems will be an indispensable part of data science, used to run everything from healthcare diagnostics to climate modelling and beyond. No longer reserved for technical specialists, AI is becoming accessible to non-technical users through user-friendly platforms, and most organisations have an opportunity to start using it today.

In order to remain relevant, professionals and students are advised to continue experimenting with new tools. Free trials, open-source platforms, and learning hubs such as GitHub, Kaggle, Coursera, and DataCamp provide immense possibilities to develop skills. There is also helpful information in online communities on Reddit, LinkedIn, and Discord.

As the AI landscape continues to evolve, those who stay curious, keep learning, and adapt quickly will lead the way in solving real-world problems through data-driven innovation.