For anyone enrolled in an AI Course in Noida, understanding the most important clustering algorithms is fundamental, not optional. Clustering techniques allow AI systems to uncover hidden patterns in datasets, make sense of unstructured data, and improve the performance of predictive models. This article provides a detailed, student-friendly introduction to the top clustering algorithms you should know if you want to master machine learning.

We will explore the concepts behind each algorithm, explain their strengths and limitations, and discuss their applications in real-world scenarios. By the end of this guide, you will have a solid understanding of key clustering techniques and their role in AI.

What is Clustering in Machine Learning?

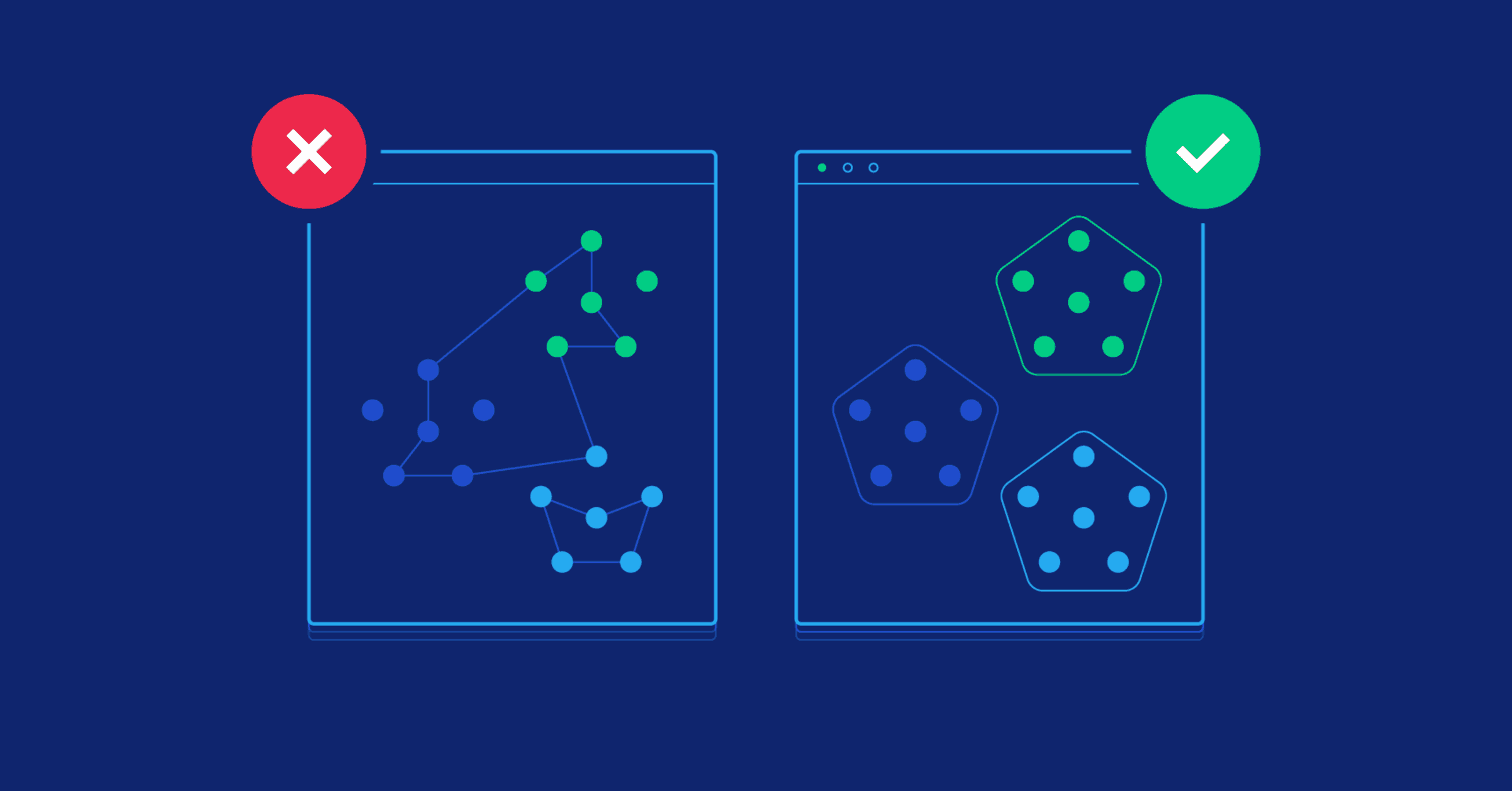

Clustering refers to the process of dividing a dataset into groups, or clusters, such that data points within the same cluster are more similar to each other than to those in other clusters. Unlike supervised learning, where models are trained on labeled data, clustering works without predefined categories. Instead, it uses similarity or distance measures to find natural groupings in the data.

Clustering is most valuable when labels are scarce or unavailable. Whether you are working on market research, image recognition, anomaly detection, or bioinformatics, clustering provides a way to explore patterns and structure in large, complex datasets. In an AI Course in Noida, you will encounter clustering techniques as part of machine learning fundamentals, learning how to apply them using Python, R, or specialized machine learning libraries.

Let us now dive into the top clustering algorithms that every AI learner should know.

K-Means Clustering

Perhaps the most popular and widely used clustering algorithm is K-Means. It is a centroid-based algorithm that divides the dataset into k clusters, where k is a user-specified number. The algorithm works by initializing k centroids (typically at random), assigning each data point to the nearest centroid, and then recalculating the centroids based on the new cluster assignments. This process repeats until the centroids no longer move significantly.
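
The assign-and-update loop described above can be sketched in plain Python. This is a minimal illustration rather than a production implementation: the function name `kmeans`, the parameters `max_iters`, `tol`, and `seed`, and the six sample points are all assumptions made for the example.

```python
import math
import random


def kmeans(points, k, max_iters=100, tol=1e-6, seed=0):
    """Plain K-Means iteration on a list of equal-length numeric tuples."""
    rng = random.Random(seed)
    # Initialise centroids by picking k distinct data points at random.
    centroids = rng.sample(points, k)
    for _ in range(max_iters):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for i, members in enumerate(clusters):
            if members:
                mean = tuple(sum(c) / len(members) for c in zip(*members))
                new_centroids.append(mean)
            else:
                # An empty cluster keeps its previous centroid.
                new_centroids.append(centroids[i])
        # Stop once no centroid moved by more than tol.
        shift = max(math.dist(a, b) for a, b in zip(centroids, new_centroids))
        centroids = new_centroids
        if shift <= tol:
            break
    labels = [min(range(k), key=lambda i: math.dist(p, centroids[i])) for p in points]
    return centroids, labels


# Illustrative data: two tight groups of three points each.
points = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1), (8.0, 8.0), (8.2, 7.9), (7.9, 8.3)]
centroids, labels = kmeans(points, k=2)
print(centroids)
print(labels)
```

In coursework you would more likely call a library implementation such as scikit-learn's `KMeans`, but writing the loop out once makes the two alternating steps easy to see.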

K-Means is popular for its simplicity, speed, and scalability. It works well when the clusters are roughly spherical and similar in size. However, it has limitations: it requires the user to choose the number of clusters in advance, it can converge to a local minimum that depends on the initial centroids (running it several times or using k-means++ initialization reduces this risk), and it does not perform well with non-convex or irregularly shaped clusters.

In practice, K-Means is used for customer segmentation, document clustering, image compression, and more. For students in an AI Course in Noida, K-Means provides an accessible entry point into clustering, offering both hands-on coding exercises and conceptual learning opportunities.

Hierarchical Clustering

Another important technique is hierarchical clustering, which builds a hierarchy or tree-like structure of clusters. There are two main approaches: agglomerative (bottom-up) and divisive (top-down). Agglomerative clustering starts with each data point as its own cluster and progressively merges the closest clusters, while divisive clustering starts with all data points in one cluster and splits them recursively. Which clusters count as "closest" depends on the linkage criterion, such as single, complete, average, or Ward linkage.

The result is a dendrogram, a tree diagram that shows how clusters are combined or divided at each step. Unlike K-Means, hierarchical clustering does not require you to predefine the number of clusters — you can decide later by cutting the dendrogram at a certain level.
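
As a rough sketch of building the tree first and choosing the cut afterwards, the example below uses SciPy's `linkage` and `fcluster` functions. The sample points and the choice of Ward linkage are assumptions made for illustration.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

# Illustrative data: two tight groups of three points each.
X = np.array([[1.0, 1.0], [1.2, 0.8], [0.8, 1.1],
              [8.0, 8.0], [8.2, 7.9], [7.9, 8.3]])

# Agglomerative (bottom-up) merging; Z holds one row per merge,
# including the two clusters joined and the distance at which they merged.
Z = linkage(X, method="ward")

# "Cut" the finished tree so that at most two flat clusters remain.
two_clusters = fcluster(Z, t=2, criterion="maxclust")
print(Z.shape)
print(two_clusters)
```

The same `Z` can be passed to `scipy.cluster.hierarchy.dendrogram` to draw the tree, and `fcluster` can be called again with a different `t` without recomputing the merges.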

Hierarchical clustering is particularly useful when you want to explore the nested relationships between data points or visualize the clustering process. Applications include gene expression analysis, document clustering, and social network analysis. Students taking an AI Course in Noida will often experiment with hierarchical clustering using tools like SciPy or scikit-learn, learning how to interpret dendrograms and tune distance metrics.

DBSCAN (Density-Based Spatial Clustering of Applications with Noise)

While K-Means and hierarchical clustering work well with compact, well-separated clusters, they tend to struggle with datasets that have irregular shapes or a lot of noise. This is where DBSCAN shines. DBSCAN is a density-based clustering algorithm that groups together points that are closely packed (dense regions) and marks points in low-density regions as outliers or noise.

DBSCAN requires two parameters: epsilon (the maximum distance between two points for them to be considered neighbors) and minPts (the minimum number of points that must lie within epsilon of a point for it to count as part of a dense region). The algorithm can discover clusters of arbitrary shapes, making it ideal for tasks like spatial data analysis, anomaly detection, or identifying patterns in noisy datasets.
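
To make the two parameters concrete, here is a small sketch using scikit-learn's `DBSCAN`, where `eps` corresponds to epsilon and `min_samples` to minPts. The nine sample points and the parameter values are assumptions chosen so that the result is easy to check by eye.

```python
import numpy as np
from sklearn.cluster import DBSCAN

X = np.array([
    [1.0, 1.0], [1.1, 1.0], [1.0, 1.1], [1.1, 1.1],  # dense group A
    [5.0, 5.0], [5.1, 5.0], [5.0, 5.1], [5.1, 5.1],  # dense group B
    [9.0, 0.0],                                      # isolated point
])

# eps is the neighbourhood radius; min_samples is scikit-learn's name for minPts
# and counts the point itself.
model = DBSCAN(eps=0.3, min_samples=3).fit(X)

# Cluster ids start at 0; the label -1 marks points treated as noise.
labels = model.labels_.tolist()
print(labels)  # [0, 0, 0, 0, 1, 1, 1, 1, -1]
```

Note that the number of clusters was never specified: it falls out of the density settings, and the isolated point is reported as noise rather than forced into a group.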

One challenge with DBSCAN is choosing the right parameters, as results can vary significantly depending on the settings, and a single epsilon makes it hard to separate clusters whose densities differ widely. Nevertheless, students enrolled in an AI Course in Noida will benefit from learning DBSCAN, as it introduces them to more robust and flexible clustering methods that go beyond simple centroid models.

Mean Shift Clustering

Mean Shift is another powerful clustering algorithm based on the concept of density. Unlike K-Means, which requires specifying the number of clusters, Mean Shift identifies the number of clusters automatically by seeking dense areas in the feature space, although it still needs a window size (the bandwidth) that controls how fine-grained the clusters are. It works by iteratively shifting each point to the mean of the points inside a window around it, which moves the point uphill in density until it converges on a local density peak. Points that converge on the same peak form one cluster.
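
The shifting procedure can be sketched in a few lines of plain Python. This is a simplified flat-window version written for illustration: the function name `mean_shift`, the window radius `bandwidth`, the stopping parameters, and the sample points are all assumptions of the sketch, and library implementations such as scikit-learn's `MeanShift` are more refined.

```python
import math


def mean_shift(points, bandwidth, max_iters=100, tol=1e-4):
    """Flat-window mean shift on a list of equal-length numeric tuples."""
    modes = []
    for start in points:
        current = start
        for _ in range(max_iters):
            # Average the data points that fall inside the window around `current`.
            window = [p for p in points if math.dist(p, current) <= bandwidth]
            shifted = tuple(sum(c) / len(window) for c in zip(*window))
            moved = math.dist(shifted, current)
            current = shifted
            if moved < tol:
                break
        modes.append(current)

    # Points whose modes ended up within one bandwidth of each other share a cluster.
    centers, labels = [], []
    for m in modes:
        for i, c in enumerate(centers):
            if math.dist(m, c) <= bandwidth:
                labels.append(i)
                break
        else:
            centers.append(m)
            labels.append(len(centers) - 1)
    return centers, labels


# Illustrative data: two tight groups of three points each.
points = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1), (8.0, 8.0), (8.2, 7.9), (7.9, 8.3)]
centers, labels = mean_shift(points, bandwidth=2.0)
print(centers)
print(labels)  # [0, 0, 0, 1, 1, 1]
```

The number of clusters is an output here, not an input, but it does depend on `bandwidth`: a very large window would merge both groups into one.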

Mean Shift is particularly useful when dealing with datasets where the number of clusters is unknown or where clusters have complex, non-spherical shapes. It is commonly applied in image processing, computer vision, and tracking problems.

However, Mean Shift can be computationally intensive, especially with large or high-dimensional datasets. Students in an AI Course in Noida will often explore Mean Shift alongside K-Means and DBSCAN to understand how centroid-based and density-based approaches compare in terms of performance and flexibility.

Gaussian Mixture Models (GMM)

While K-Means assigns each point to a single cluster, Gaussian Mixture Models (GMM) take a probabilistic approach by assuming that the data is generated from a mixture of several Gaussian distributions (normal distributions). Each data point is assigned a probability of belonging to each cluster, allowing for softer, more flexible clustering.

GMM uses the Expectation-Maximization (EM) algorithm to estimate the parameters of the Gaussian components, iteratively updating the probabilities and the Gaussian parameters until convergence. GMM is especially powerful when the clusters overlap or have different shapes and sizes, situations where K-Means may fail. Like K-Means, it needs the number of components to be chosen in advance, and EM can converge to a local optimum.
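
The difference between hard and soft assignments is easiest to see in code. The sketch below uses scikit-learn's `GaussianMixture`, whose `fit` method runs EM internally. The synthetic blobs, their locations and spreads, and the random seeds are assumptions made for illustration.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(0)
# Illustrative data: two 2-D blobs with different spreads.
X = np.vstack([
    rng.normal(loc=[0.0, 0.0], scale=0.5, size=(100, 2)),
    rng.normal(loc=[4.0, 4.0], scale=1.0, size=(100, 2)),
])

# covariance_type="full" lets each component learn its own shape and size.
gmm = GaussianMixture(n_components=2, covariance_type="full", random_state=0).fit(X)

hard_labels = gmm.predict(X)        # most likely component for each point
soft_labels = gmm.predict_proba(X)  # one probability per component, per point
print(soft_labels[:3].round(3))
print(gmm.means_.round(2))
```

Each row of `soft_labels` sums to 1, so a point lying between the two blobs would show up with a split such as 0.6 and 0.4 instead of being forced into one cluster.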

Applications include speaker identification, image segmentation, and anomaly detection. For students enrolled in an AI Course in Noida, learning GMM provides a solid introduction to probabilistic models and soft clustering techniques, helping them understand scenarios where hard clustering falls short.

Spectral Clustering

Spectral clustering is an advanced technique that uses graph theory and linear algebra to partition data. The idea is to represent the dataset as a graph, where each node is a data point, and the edges represent the similarity between points. The algorithm computes eigenvectors of the graph’s Laplacian matrix and uses a few of them as new coordinates for each point. In this new space, groups that are well connected in the graph sit close together, so a simple method such as K-Means can separate them.
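
As a hedged illustration, the sketch below runs scikit-learn's `SpectralClustering` on the two-moons toy dataset and compares it with K-Means on the same points. The dataset, the nearest-neighbour graph, and every parameter value are assumptions chosen for the example, and the helper `agreement` is a hypothetical function written only to score the result.

```python
import numpy as np
from sklearn.cluster import KMeans, SpectralClustering
from sklearn.datasets import make_moons

# Two interleaved half-circles: a classic non-convex test case.
X, y_true = make_moons(n_samples=200, noise=0.05, random_state=0)

# Build the similarity graph from each point's 10 nearest neighbours.
spectral = SpectralClustering(
    n_clusters=2,
    affinity="nearest_neighbors",
    n_neighbors=10,
    random_state=0,
)
spectral_labels = spectral.fit_predict(X)
kmeans_labels = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(X)


def agreement(labels, truth):
    """Fraction of points matching the truth, allowing the two label names to be swapped."""
    acc = float(np.mean(labels == truth))
    return max(acc, 1.0 - acc)


print("spectral:", agreement(spectral_labels, y_true))
print("k-means: ", agreement(kmeans_labels, y_true))
```

scikit-learn may warn that the neighbour graph is not fully connected. On this dataset that is expected, because the two moons share few or no edges, which is exactly the structure the method exploits.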

Spectral clustering is particularly effective for non-convex clusters, complex geometric patterns, or when traditional methods like K-Means struggle. It is widely used in image segmentation, community detection in networks, and bioinformatics.

Students taking an AI Course in Noida will typically encounter spectral clustering in advanced modules or projects, where they learn how to apply it using Python libraries and interpret the results in terms of graph structures.

BIRCH (Balanced Iterative Reducing and Clustering Using Hierarchies)

BIRCH is a specialized clustering algorithm designed for large datasets. It builds a compact summary of the data called a CF (Clustering Feature) tree, which allows it to efficiently cluster massive datasets incrementally. BIRCH is particularly useful when memory or computational resources are limited.
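
The incremental behaviour can be sketched with scikit-learn's `Birch`, which accepts data in chunks through `partial_fit`. The helper `make_batch`, the synthetic data, and the `threshold` and batch sizes below are assumptions made for illustration.

```python
import numpy as np
from sklearn.cluster import Birch

rng = np.random.default_rng(0)


def make_batch(n):
    """Illustrative data: half the rows near (0, 0), half near (5, 5)."""
    return np.vstack([
        rng.normal(loc=0.0, scale=0.3, size=(n // 2, 2)),
        rng.normal(loc=5.0, scale=0.3, size=(n // 2, 2)),
    ])


# threshold limits the radius of each subcluster stored in the CF tree;
# n_clusters=2 asks for a final grouping of those subclusters.
birch = Birch(threshold=0.5, branching_factor=50, n_clusters=2)

# Feed the data in chunks, as if it did not fit in memory all at once.
for _ in range(5):
    birch.partial_fit(make_batch(200))

n_subclusters = len(birch.subcluster_centers_)
predictions = birch.predict(np.array([[0.1, -0.2], [5.2, 4.9]]))
print(n_subclusters, "subclusters summarise 1000 points")
print(predictions)
```

Only the subcluster summaries are kept between calls, which is why memory use stays small compared with storing every point.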

While BIRCH is not as widely used as K-Means or DBSCAN, it provides an important tool for scaling clustering algorithms to big data applications. In an AI Course in Noida, students working on large-scale projects will benefit from understanding BIRCH and similar scalable approaches.

Applications of Clustering Algorithms in AI

The clustering algorithms we have discussed are not just theoretical exercises; they have powerful real-world applications. In marketing, clustering helps segment customers based on purchasing behavior, enabling personalized recommendations and targeted campaigns. In healthcare, clustering can identify patient groups with similar symptoms or genetic profiles, improving diagnosis and treatment. In computer vision, clustering aids in object recognition, image compression, and scene understanding.

Moreover, in AI applications such as natural language processing (NLP), clustering is used to group similar documents, detect topics, or improve search relevance. In cybersecurity, clustering can detect patterns of fraudulent activity or uncover suspicious behavior.

Students in an AI Course in Noida will gain hands-on experience applying clustering algorithms to diverse datasets, learning not just the theory but also the practical considerations, such as feature selection, scaling, parameter tuning, and evaluation.

Challenges and Considerations

While clustering is a powerful tool, it comes with challenges. One of the most common issues is determining the optimal number of clusters. Methods such as the elbow method, silhouette score, or gap statistic can help, but there is no universal answer.
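
One of these methods, the silhouette score, can be sketched as follows: run K-Means for several candidate values of k and keep the value with the highest score. The synthetic data, the candidate range, and the seeds are assumptions made for illustration.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Illustrative data: three well-separated blobs.
X, _ = make_blobs(
    n_samples=300,
    centers=[[0, 0], [10, 10], [-10, 10]],
    cluster_std=1.0,
    random_state=0,
)

scores = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(X)
    # Silhouette ranges from -1 to 1; higher means tighter, better-separated clusters.
    scores[k] = silhouette_score(X, labels)

best_k = max(scores, key=scores.get)
print(scores)
print("best k by silhouette:", best_k)
```

On clean data like this the peak is obvious. On real datasets the scores are often close together, so treat the result as a hint to combine with domain knowledge, not a verdict.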

Another challenge is scaling to high-dimensional data. As the number of features grows, the distances between pairs of points tend to become nearly equal, so the distance measures used by many algorithms carry less information. This problem is known as the curse of dimensionality. Feature engineering, dimensionality reduction (such as PCA), or using algorithms designed for high-dimensional data can help.
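
A common pattern is to standardise the features, reduce them with PCA, and only then cluster. The sketch below shows that pipeline with scikit-learn. The 50-feature synthetic dataset and the choice of two components are assumptions made for illustration, not recommendations.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Illustrative data: 3 groups described by 50 features.
X, _ = make_blobs(n_samples=300, n_features=50, centers=3, random_state=0)

# Standardise first so that no single feature dominates the distances.
X_scaled = StandardScaler().fit_transform(X)

# Keep the two directions that capture the most variance.
pca = PCA(n_components=2, random_state=0)
X_reduced = pca.fit_transform(X_scaled)

labels = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(X_reduced)
variance_kept = float(pca.explained_variance_ratio_.sum())
print(X_reduced.shape, round(variance_kept, 3))
```

Checking `variance_kept` is a quick way to judge how much information the reduction discarded. If it is low, keeping more components may be worth the extra dimensions.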

Finally, clustering results can be sensitive to initialization, parameter settings, and the choice of distance or similarity measure. Students in an AI Course in Noida will learn to navigate these challenges by experimenting with different algorithms, visualizations, and validation techniques.

Conclusion

Clustering is one of the most fascinating and versatile techniques in machine learning, offering a gateway to exploring patterns in unlabeled data. From the simplicity of K-Means to the sophistication of spectral clustering, the clustering algorithms covered in this article provide a rich toolkit for any aspiring AI practitioner.

For students enrolled in an AI Course in Noida, mastering clustering algorithms is essential for both academic success and real-world impact. Whether you are segmenting customers, detecting fraud, analyzing images, or exploring social networks, clustering empowers you to uncover hidden structure and make data-driven decisions.

As you progress in your AI journey, remember that no single clustering method is perfect. The best approach depends on your data, your goals, and your willingness to experiment and learn. By building a solid foundation in clustering, you are setting yourself up for success in the exciting and ever-evolving world of artificial intelligence.