Without such pipelines, data scientists and engineers would spend most of their time manually gathering, preparing, and compiling data before the actual analysis could even begin. Data pipelines address this by simplifying the process and ensuring that data moves logically and efficiently through the phases of a project. This makes them an essential component of contemporary data science projects, whatever the industry or scale.

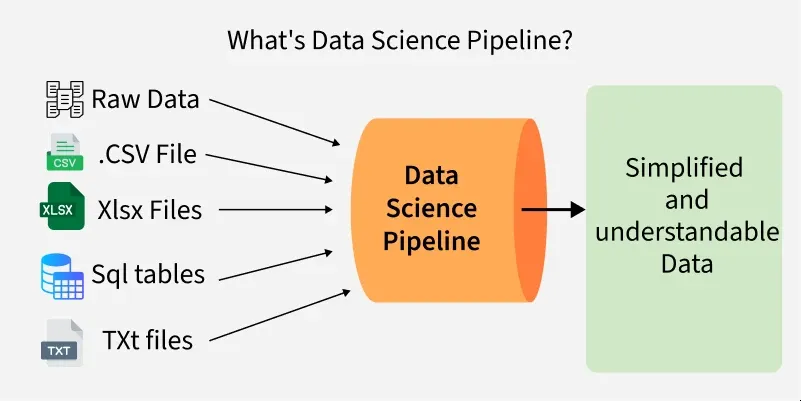

A data pipeline can be compared to a physical water pipeline. Just as water pipelines carry water from a source, such as a river, to a tap where it can be consumed, data pipelines carry raw data from a source, such as a database, API, or log files, to a destination, such as a data warehouse, dashboard, or machine learning model, where it can be used. The path in between is seldom straightforward: raw data is frequently messy, unstructured, or in incompatible formats. It has to be cleaned, standardised, and structured before it can be analysed, and a pipeline makes this happen automatically. The analogy helps to show why pipelines matter: they reduce the risk of errors and streamline a process that would otherwise be time-consuming and unpredictable.

The growing importance of data pipelines is explained by the sheer complexity of today's data sources. A typical business records sales transactions, visitor interactions, web clicks, social media activity, IoT sensor readings, and internal activity logs, all arriving at different rates and in different formats. Unifying such dissimilar data and making it usable for analysis would be an uphill task to do manually, especially at scale. Data pipelines address this by combining the various sources, automating the flow of data, and transforming it so that everything fits the same structure. For a data science project this is especially pertinent, since the quality and reliability of the input data largely determine whether any subsequent insights, models, or forecasts will be accurate.
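To make the idea of fitting every source to the same structure concrete, here is a minimal Python sketch. The two sources, their field names (`cust_id`, `sold_at`, `userId`, `ts`), and the shared schema are all hypothetical and chosen only for illustration; a real pipeline would use whatever its own systems expose.

```python
from datetime import datetime, timezone


def from_sales_row(row: dict) -> dict:
    """Map a row from a hypothetical sales database onto the shared schema."""
    return {
        "source": "sales_db",
        "customer_id": str(row["cust_id"]),
        "event": "purchase",
        # Assumes ISO 8601 text with an explicit UTC offset.
        "timestamp": datetime.fromisoformat(row["sold_at"]).astimezone(timezone.utc),
    }


def from_web_click(event: dict) -> dict:
    """Map a hypothetical web click event onto the shared schema."""
    return {
        "source": "web",
        "customer_id": str(event["userId"]),
        "event": "click",
        # Assumes a Unix timestamp in seconds.
        "timestamp": datetime.fromtimestamp(event["ts"], tz=timezone.utc),
    }


def unify(sales_rows: list, web_events: list) -> list:
    """Combine both sources into one list of records ordered by time."""
    records = [from_sales_row(r) for r in sales_rows]
    records += [from_web_click(e) for e in web_events]
    return sorted(records, key=lambda r: r["timestamp"])
```

Each source gets its own small adapter, so adding a new source means writing one more function rather than changing the code that consumes the unified records.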

One of the most important jobs of a data pipeline is data transformation. Raw data cannot always be used as it is; it may contain missing values, duplicates, outliers, or inconsistencies. For instance, customer information retrieved from two or more systems may record the same individual in slightly different ways: one system might include a middle initial that another omits, or one might still hold an old address. Left unchecked, these discrepancies can distort findings. Pipelines are designed to identify and correct such problems so that the dataset is trustworthy before analysis begins. This is commonly referred to as the ETL process, where ETL stands for Extract, Transform, and Load. Extraction collects the data from wherever it resides, transformation cleans and prepares it, and loading moves it to its destination, which can be a data warehouse or an analysis platform. ETL has long been the foundation of data pipelines, but for real-time use cases organisations may also employ ELT (Extract, Load, Transform), which loads the raw data first and transforms it afterwards in order to speed up the process.
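The three ETL stages can be sketched in a few lines of Python using only the standard library. This is an illustrative sketch, not a production design: the CSV layout, the `customers` table, and the rule of treating a lower-cased email address as the customer's identity are all assumptions made for the example.

```python
import csv
import io
import sqlite3


def extract(csv_text: str) -> list:
    """Extract: read raw rows from CSV text (a stand-in for a real source)."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def transform(rows: list) -> list:
    """Transform: normalise fields, drop rows without an email, deduplicate."""
    cleaned = {}
    for row in rows:
        email = (row.get("email") or "").strip().lower()
        name = " ".join((row.get("name") or "").split())  # collapse extra spaces
        if not email:
            continue  # assumed rule: a record without an email is unusable
        cleaned[email] = {"email": email, "name": name}  # later rows win
    return list(cleaned.values())


def load(rows: list, conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows to the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (email TEXT PRIMARY KEY, name TEXT)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO customers (email, name) VALUES (:email, :name)",
        rows,
    )
    conn.commit()


def run_etl(csv_text: str, conn: sqlite3.Connection) -> int:
    """Run the three stages in order and return the number of rows loaded."""
    rows = transform(extract(csv_text))
    load(rows, conn)
    return len(rows)
```

An ELT variant would call `load` on the raw rows first and perform the clean-up inside the destination, for example with SQL.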

Automation is another defining aspect of data pipelines and one of their greatest contributions to data science. In the past, teams ran batch processes that often depended on a person executing a script or program whenever new data had built up. This was cumbersome and unreliable because of the risk of human error, especially when large amounts of data had to be updated regularly. Modern pipelines, by contrast, are built to run autonomously, either on a schedule or in response to triggers such as the arrival of new data. For example, an e-commerce organisation might configure a pipeline that updates its sales dashboards every hour using live transaction data. This not only saves time but also gives decision-makers the most up-to-date insights at all times, which is crucial in highly competitive sectors.
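A trigger based on the arrival of new data can be approximated very simply by checking an inbox directory for files that have not been seen before. The sketch below is one hypothetical way to do this in Python; the function name `process_new_files`, the `*.csv` pattern, and the in-memory `seen` set are assumptions for illustration, and a real deployment would more likely rely on a scheduler or an orchestration tool and persist its state.

```python
from pathlib import Path
from typing import Callable


def process_new_files(
    inbox: Path, seen: set, handler: Callable[[Path], None]
) -> list:
    """Run handler on every CSV file in inbox that has not been handled yet.

    Returns the names of the files processed during this call.
    """
    new_names = []
    for path in sorted(inbox.glob("*.csv")):
        if path.name in seen:
            continue  # already processed in an earlier run
        handler(path)
        seen.add(path.name)  # mark as done only after the handler succeeds
        new_names.append(path.name)
    return new_names
```

Calling this function once an hour from a scheduler gives schedule-based behaviour, while calling it in a short polling loop approximates an arrival trigger.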

Another key benefit of data pipelines in data science projects is scalability. As a business grows, so does the amount of data it handles. Managing terabytes or petabytes of information without a good pipeline would not be feasible. An effective pipeline scales to support growing volumes and sources of data without stalling or requiring a complete redesign. Cloud platforms and tools such as Apache Kafka, Apache Airflow, and Spark have also made it possible for organisations to process large volumes of data, including real-time streams, cost-effectively and at scale. This scalability keeps pipelines relevant as projects change and grow.

Data pipelines are not only about technical efficiency. They also improve collaboration across teams in an organisation. Data engineers, analysts, and data scientists work together more easily when they can trust that the pipeline is delivering high-quality data. Rather than spending time debating the correctness of datasets or matching records by hand, they can focus on more valuable tasks such as developing predictive models, designing algorithms, or deriving business insights. In effect, pipelines create a shared source of truth on which all other analyses and decisions can be built.

Practical applications show the transformative power of data pipelines in the real world. In healthcare, pipelines can link patient records with laboratory results and data from wearable devices to give doctors a comprehensive, near-real-time view of a patient's health. In finance, pipelines can combine market information, trading history, and customer purchases to detect patterns of fraud as they occur. In manufacturing, pipelines that gather and analyse sensor data from machines can predict maintenance needs before a machine breaks down. Even in everyday consumer services, such as ride-sharing or food delivery apps, pipelines continuously process live data to balance supply and demand, optimise routes, and improve the customer experience. These examples demonstrate that pipelines are not abstract tools but highly practical systems that underpin everyday business operations and decisions.

It should also be noted that building and maintaining data pipelines is not always easy. Engineering a pipeline is highly technical and requires a thorough understanding of both the data sources and the objectives of the project. Pipelines occasionally break because of broken connections, system updates, or unexpected data formats, any of which can cause delays or inaccuracies. Data security and privacy are another serious concern, particularly when handling confidential data such as financial records or health reports.
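One common defence against unexpected data formats is to validate each incoming record against an expected schema and set aside anything that does not match, rather than letting it fail deep inside the pipeline. The following Python sketch illustrates the idea; the `EXPECTED_FIELDS` schema and the order fields in it are hypothetical, and real projects often use a dedicated validation library instead.

```python
# Hypothetical schema for an incoming order record: field name -> expected type.
EXPECTED_FIELDS = {"order_id": int, "amount": float, "currency": str}


def validate(record: dict) -> list:
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for field, expected_type in EXPECTED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(
                f"{field}: expected {expected_type.__name__}, got {actual}"
            )
    return problems


def split_valid(records: list) -> tuple:
    """Separate records into valid ones and rejected ones with their problems."""
    valid, rejected = [], []
    for record in records:
        problems = validate(record)
        if problems:
            rejected.append((record, problems))  # keep for later inspection
        else:
            valid.append(record)
    return valid, rejected
```

Keeping the rejected records together with the reasons for rejection makes it easier to spot when an upstream system has changed its format.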

Nevertheless, the advantages of data pipelines significantly outweigh their shortcomings, which is why it is hard to imagine a data-driven world without them. They enable projects to move quickly, accurately, and consistently, and these attributes are vital when organisations compete to draw insights faster than their rivals. For data scientists, pipelines are an empowering tool that relieves them of repetitive, time-consuming data preparation work, leaving more time and energy for actual analysis, experimentation, and innovation. Metaphorically, the pipeline is the invisible spine of any successful data science project: it works in the background, yet it determines the quality of everything built on it.

Looking ahead, the role of data pipelines will only become more critical as new technologies generate more, and more complex, datasets. As artificial intelligence, machine learning, and Internet of Things devices proliferate, the need for systems that can process real-time data flows will grow rapidly. Pipelines will continue to evolve, becoming smarter, more flexible, and more tightly integrated with cloud-native tools. Concepts such as serverless architectures, machine learning-based data quality checks, and automated monitoring will further increase their efficiency. Data science practitioners and organisations that intend to remain competitive will need to understand and invest in pipeline architecture.

To conclude, data pipelines are not just technology infrastructure; they are the lifeline of data science projects. By automating the journey from raw data to generated insights, they support reliability, consistency, and scalability, and they enable collaboration and real-time decision-making.